Why ChatGPT Traffic Shows as Direct in GA4 (2026)


- How GA4 actually classifies ChatGPT traffic today

- The four mechanisms that strip the referrer

- Free tier, paid tier, and the referrer signal

- Mobile is the dark half

- When UTMs survive and when they don't

- The compound math: referrer stripping times consent rejection

- The custom channel group, and what it cannot fix

- Measure what is in your Direct bucket

- What Clickport catches that default GA4 does not

- Frequently asked questions

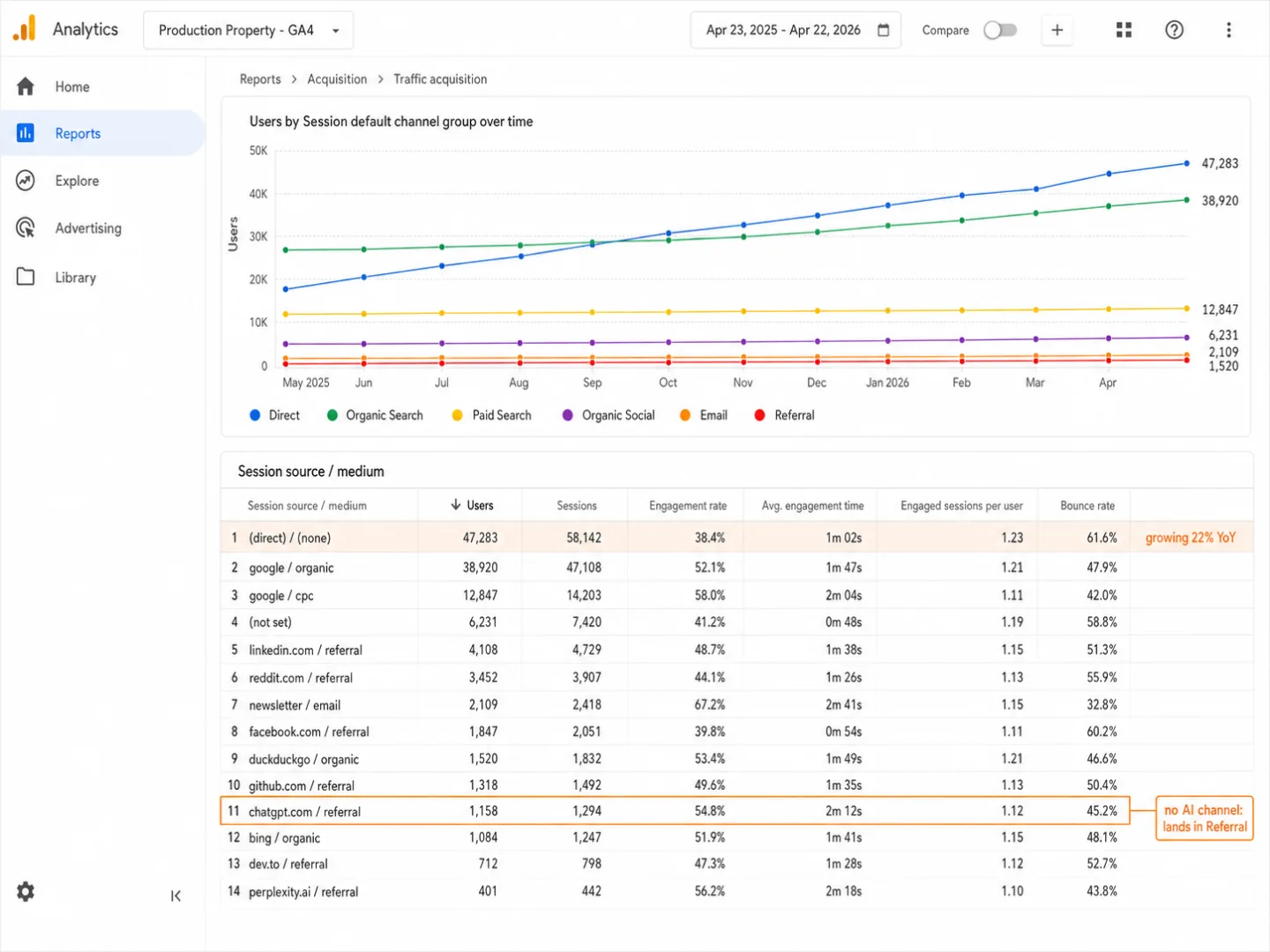

Your ChatGPT traffic isn't invisible in GA4. It's hiding in plain sight, in two buckets that already exist. Across Clickport customer sites in April 2026, about two-thirds of it sat in Referral, mixed in with every blog link and directory mention. The other third landed in Direct: visits with no referrer and no UTM. So why does it feel invisible? Because GA4 never labels it as AI. Both buckets hide it. Each one hides it differently.

- GA4's default channel group has no AI channel as of April 2026. ChatGPT with a referrer lands in Referral, alongside blog links. ChatGPT with no referrer and no UTM lands in Direct. Neither bucket says it's AI.

- Four mechanisms strip the referrer from ChatGPT clicks: the strict-origin-when-cross-origin policy on chatgpt.com, rel=noreferrer on paid-tier inline links, mobile-app WKWebView (iOS) and Custom Tabs (Android), and clipboard copy-paste. Only the first preserves the origin.

- Across 371,847 sessions on Clickport customer sites in April 2026, 35.7% of AI-classified traffic arrived UTM-only, with no HTTP referrer. ChatGPT accounted for 76% of all AI Search sessions in that dataset.

- Google's custom channel group documentation added an AI Assistants regex example in July 2025, but it is user-configured, not default. Applied retroactively, it pulls the referrer-bearing two-thirds out of Referral. It cannot recover the no-signal third from Direct.

- On EU sites, consent rejection stacks on top of referrer stripping. Google's 2021 Consent Mode announcement claimed modeled recovery of 'more than 70%' of lost ad-click-to-conversion journeys. etracker's 2025 consent benchmark puts compliant-banner rejection around 60%. For an illustrative 100 ChatGPT visits on an EU site, the compound effect is that roughly one in six ends up fully attributed.

How GA4 actually classifies ChatGPT traffic today

GA4's default channel group has 18 channels. None of them are AI. That's the whole problem in one sentence. The rules aren't broken and nothing is hidden. They do exactly what Google documents. There just isn't a rule that treats AI as its own source.

So where does a ChatGPT visit go? It depends on what the visit carries. A visit with a referrer header lands in Referral, source chatgpt.com, medium referral. It sits right next to directory listings, blog posts, the odd forum link. A visit carrying only a UTM parameter (utm_source=chatgpt.com, no utm_medium) falls through the Referral and Organic rules and ends up in Unassigned. A visit with neither signal goes to Direct. That last pile is the biggest one.

Here's the Direct rule, word for word from Google's help center: "Source exactly matches '(direct)' AND Medium is one of ('(not set)', '(none)')." In plain English: if GA4 can't tell where the visit came from, it calls it Direct. It makes no difference whether the visitor typed your URL, clicked a bookmark, or tapped a link inside the ChatGPT iOS app. No signal, no distinction.
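The three outcomes described above can be sketched as a small decision function. This is a toy model of only the cases this section walks through, not GA4's full rule set: it ignores search engines, paid media, and every other default channel. The function and parameter names are invented for illustration.

```python
from typing import Optional


def default_channel(referrer_host: Optional[str],
                    utm_source: Optional[str],
                    utm_medium: Optional[str] = None) -> str:
    """Bucket a visit using only the three cases described in this section."""
    if referrer_host:
        # Referrer present: source = referring host, medium = referral.
        return "Referral"
    if utm_source and not utm_medium:
        # A source with no medium matches none of the default rules.
        return "Unassigned"
    if not utm_source:
        # No signal at all: source "(direct)", medium "(none)" or "(not set)".
        return "Direct"
    # Source and medium both set: the outcome depends on the medium value.
    return "Other (decided by utm_medium)"


for visit in [("chatgpt.com", None), (None, "chatgpt.com"), (None, None)]:
    print(visit, "->", default_channel(*visit))
```

The last visit in the loop is the interesting one: a tap from the ChatGPT iOS app and a hand-typed URL produce the same inputs, so they produce the same bucket.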

Google did add a custom-channel example for AI assistants to its documentation in July 2025. The example is a regex you set up yourself, not a default channel. Out of the box, GA4 still has no AI category. PPC Land called it "the first time the platform has officially recognized artificial intelligence tools as distinct traffic sources." That's generous. Adding a regex example to a help page is not the same as shipping a channel.

What does that look like in a real setup? Two patterns. If your Direct bucket has climbed quarter after quarter and nothing changed about how people type in your domain, ChatGPT mobile is a fair suspect for the new volume. If your Referral bucket holds the same traffic under chatgpt.com / referral but nobody broke it out in your dashboard, the traffic is there. You just can't see it without drilling in. Both cases come back to the same cause. No AI channel, so AI traffic spills wherever the default rules send it.

One clean line from Dana DiTomaso at the Analytics Playbook: "I guarantee you it's already in your GA4. It's just hiding."

The four mechanisms that strip the referrer

ChatGPT doesn't strip the referrer in one way. Four separate behaviors delete or trim the signal at different points in the chain. Three remove it completely. The remaining one, the page-level Referrer-Policy, keeps only the origin.

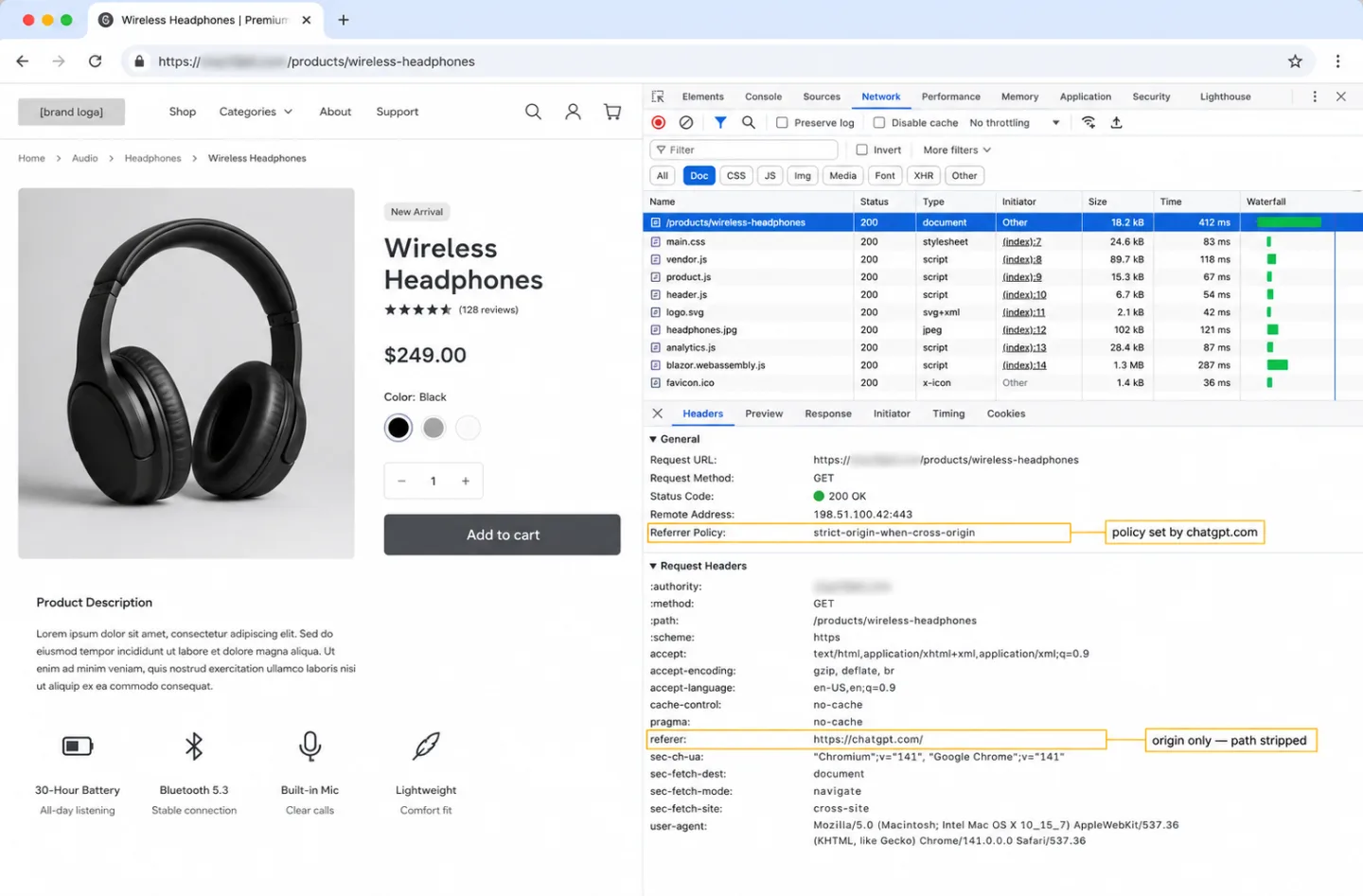

The first is chatgpt.com's Referrer-Policy header. Check the response headers and you'll see referrer-policy: strict-origin-when-cross-origin. That's the W3C default since Chrome 85 in August 2020. What it means in plain words: when someone leaves chatgpt.com for your site, the browser sends only the origin (https://chatgpt.com/) with no path attached. Your analytics can't tell whether the visit came from a chat reply, a shared conversation, or a search citation. And if your site still runs on HTTP rather than HTTPS, the browser drops the referrer altogether.
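As a rough sketch of what that policy does, the function below returns the Referer value a browser would send under strict-origin-when-cross-origin. It is simplified on purpose: it compares scheme and host as plain strings, does not normalize default ports, and treats only HTTPS-to-HTTP as a downgrade. The conversation path in the example URL is hypothetical.

```python
from typing import Optional
from urllib.parse import urlsplit


def origin(url: str) -> str:
    """Scheme plus host (and port, if written) of a URL."""
    parts = urlsplit(url)
    return f"{parts.scheme}://{parts.netloc}"


def referrer_sent(from_url: str, to_url: str) -> Optional[str]:
    """Referer under strict-origin-when-cross-origin, simplified."""
    src, dst = urlsplit(from_url), urlsplit(to_url)
    if src.scheme == "https" and dst.scheme != "https":
        return None                    # downgrade: nothing is sent
    if origin(from_url) == origin(to_url):
        return from_url                # same origin: full URL
    return origin(from_url) + "/"      # cross origin: origin only, no path


# Hypothetical conversation URL on chatgpt.com linking out to your site.
print(referrer_sent("https://chatgpt.com/c/example-id", "https://example.com/post"))
print(referrer_sent("https://chatgpt.com/c/example-id", "http://example.com/post"))
```

The path that would tell you which conversation or surface sent the click never leaves the browser, so there is nothing on the receiving end to parse.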

The second is rel="noreferrer" on anchor tags. Per the WHATWG HTML standard, this attribute tells the browser to drop the Referer header completely, no matter what the page-level policy says. Ahrefs's investigation found the behavior splits by tier. Paid ChatGPT accounts put noreferrer on inline links inside chat replies. Free accounts don't. Source-citation links at the bottom of a reply get a utm_source=chatgpt.com parameter instead. OpenAI has never said why the split exists.

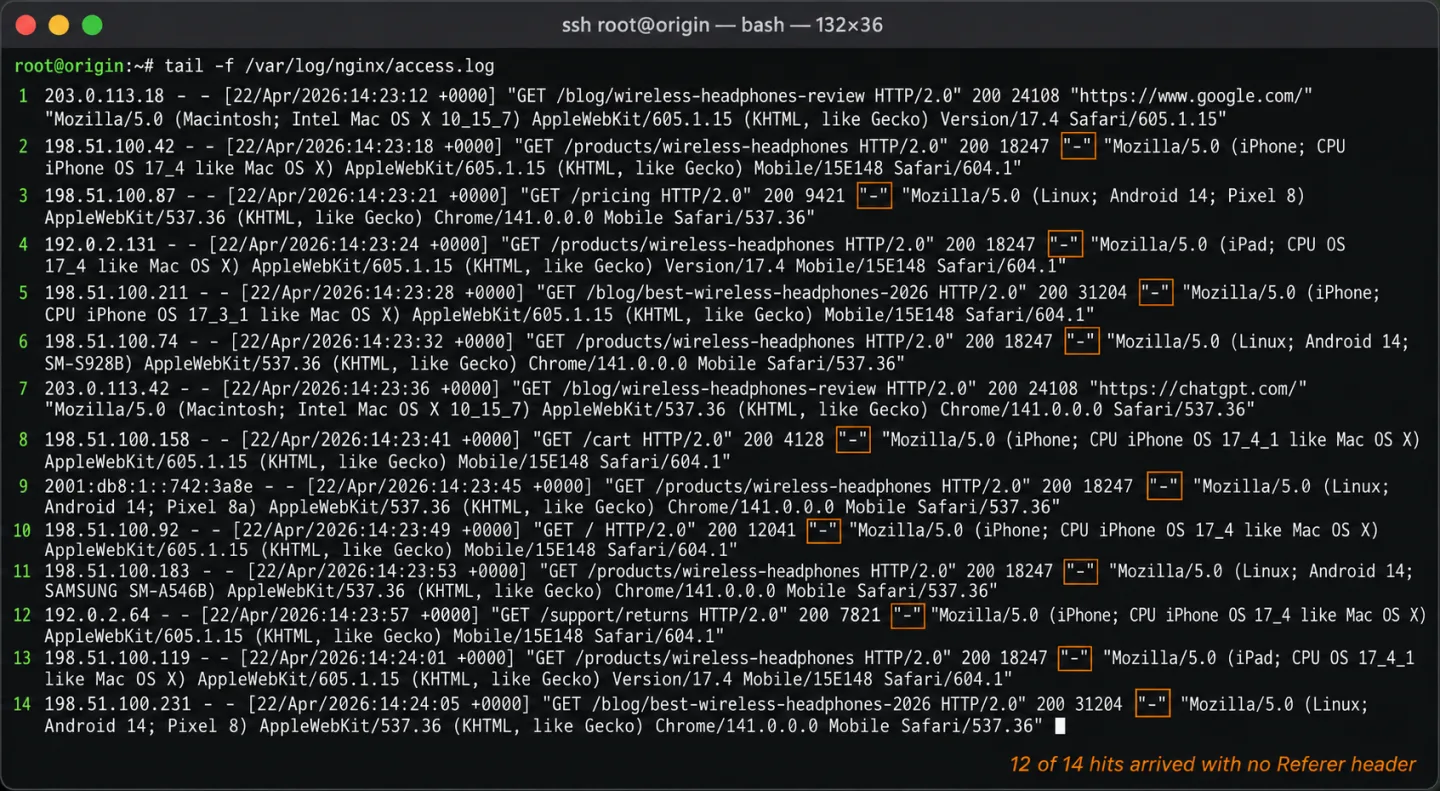

The third is mobile. The ChatGPT iOS app opens external links in WKWebView, Apple's embedded-browser component. When the link opens, the Referer header doesn't reach the destination. You can see it documented in a GitHub issue on OpenAI's own apps-sdk-examples repo, where the missing Referer broke YouTube embeds. Android does the same thing through in-app WebView or Chrome Custom Tabs. Both drop the Referer during the handoff. As of early 2026, the ChatGPT mobile apps had roughly 68 million combined iOS and Android monthly downloads. All of that volume reaches your site looking identical to someone who typed your URL by hand.

The fourth is clipboard copy-paste. When someone copies a URL from a ChatGPT reply and pastes it into the address bar, the browser sends no Referer. This isn't a setting anyone chose. It's the HTTP spec: per RFC 9110, the Referer is the "URI of the resource from which the target URI was obtained." Typed or pasted text has no referring URI. The one thing that survives a copy-paste is the utm_source parameter on a citation link, because the UTM is part of the URL string itself. That's why a UTM holds up where a Referer header doesn't.

| Context | Referer header | UTM added | GA4 bucket |
|---|---|---|---|
| Desktop web, citation link | https://chatgpt.com/ | utm_source=chatgpt.com | Referral |
| Desktop web, inline link, free tier | https://chatgpt.com/ | none | Referral |
| Desktop web, inline link, paid tier | none (rel=noreferrer) | none | Direct |
| iOS app tap-through | none (WKWebView) | none | Direct |
| Android app tap-through | none (Custom Tabs) | none | Direct |
| Copy-paste of citation URL | none (clipboard) | utm_source=chatgpt.com | Referral or Unassigned |
| Copy-paste of inline URL | none | none | Direct |

None of these behaviors belong to ChatGPT alone. Mobile apps across the board strip referrers. rel="noreferrer" is standard practice. The W3C default policy applies to every HTTPS site. What's specific to ChatGPT is the stack: one platform with very high traffic, a paid tier that adds noreferrer, and a mobile app with tens of millions of monthly downloads. Put those together and the signal disappears in four different ways at once.

Free tier, paid tier, and the referrer signal

There's a pattern that shows up across practitioner analyses but that OpenAI has never documented. Paid Plus and Team subscribers have the referrer stripped from inline links in chat replies. Free ChatGPT users keep the referrer on the same kind of links. Source citations, the numbered references at the bottom of a reply, get UTMs whatever the tier.

So the outcome depends on who reads you. A consumer site with mostly free users will see real traffic land correctly as chatgpt.com / referral in GA4, because the inline links carry the Referer header. A B2B SaaS publisher whose readers lean toward paid ChatGPT subscribers will see close to none of it. Same reply, different numbers for different publishers.

Rand Fishkin put the ceiling on this cleanly in January 2025: "places that no longer like to refer traffic like LinkedIn, like a Reddit, like a ChatGPT, like a Perplexity. They don't send direct visits. What they do is influence people to buy." So the gap isn't a bug OpenAI is going to fix. The platforms value their own visibility over your ability to measure it. That's the design, not the defect.

And it isn't an OpenAI thing either. Facebook does it with l.facebook.com link shims. LinkedIn does it with lnkd.in. Reddit's mobile app strips referrers through Chrome Custom Tabs. SparkToro's 2023 controlled experiment ran 1,113 visits across 16 platforms and found that every single visit from TikTok, Slack, Discord, Mastodon, and WhatsApp, all 100% of them, got misattributed as Direct in GA4. ChatGPT isn't the first platform to do this. It's the first one most marketers have noticed.

Mobile is the dark half

The ChatGPT mobile apps send traffic that no referrer-based method can attribute. On iOS, the app uses WKWebView, Apple's embedded-browser engine. On Android, it uses Chrome Custom Tabs or an in-app WebView. Both drop the Referer header when the user taps an external link. No UTM gets added. Your site gets a bare GET request from an iOS or Android user-agent, and it looks exactly like someone who typed the URL in by hand.

Volume is the point. The ChatGPT iOS app has been the top free app on the App Store over and over since launch. Combined iOS and Android monthly downloads run into the tens of millions. For most publishers, mobile is the biggest slice of ChatGPT-driven traffic. It's also the biggest slice nobody can attribute.

ChatGPT Atlas, OpenAI's Chromium-based browser released October 21, 2025, adds yet another case. In the GA4 Browser dimension, Atlas reports as Chrome 141, which means you can't tell it apart from plain Chrome. Open a link from inside the ChatGPT interface in Atlas and the referrer is stripped, same as the mobile app. Use Atlas as a normal browser, land on your site from chatgpt.com through its search surface, and the session does show as chatgpt.com / referral. But Atlas keeps its cookies apart from the user's main Chrome profile, so returning visitors show up as new ones in GA4. Dana DiTomaso documented this within days of launch. So Atlas pads your new-user count and still drops a share of its sessions into Direct.

Dan Taylor's MarTech analysis lined Atlas up against Perplexity's Comet browser, which shipped earlier. Comet passes the referrer cleanly. Sessions from Comet land as perplexity.ai / referral in GA4. Atlas doesn't. Two AI browsers, two architectures, two different answers at the analytics layer.

More AI browsers are coming, and each one adds its own edge cases. Every new browser is a new referrer behavior to chase.

When UTMs survive and when they don't

OpenAI has shipped exactly two attribution improvements worth the name since launch. Both did the same thing: add UTM parameters to links that had none. Neither one covers most ChatGPT traffic.

On October 31, 2024, OpenAI launched ChatGPT Search. Citation links in Search-mode replies started carrying utm_source=chatgpt.com. For the first time, a slice of ChatGPT traffic had a machine-readable tag that didn't depend on the referrer header. The UTM survives mobile apps, paid-tier noreferrer, and clipboard copy-paste, because it lives in the URL string itself, not in an HTTP header. UTMs travel. Referrers don't.
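A minimal sketch of why the tag outlives the header: the UTM is read from the landing URL, while the referrer has to arrive as a separate request header. The function name is made up, and the headers are modeled as a plain dictionary for simplicity (real header lookups are case-insensitive).

```python
from typing import Mapping, Optional, Tuple
from urllib.parse import parse_qs, urlsplit


def attribution_signals(landing_url: str,
                        headers: Mapping[str, str]) -> Tuple[Optional[str], Optional[str]]:
    """Return (referrer, utm_source) as an analytics tag or server would see them."""
    referrer = headers.get("Referer")  # missing after a paste or an in-app open
    utm = parse_qs(urlsplit(landing_url).query).get("utm_source", [None])[0]
    return referrer, utm


# A citation URL pasted into the address bar: no Referer header, UTM intact.
pasted = attribution_signals("https://example.com/guide?utm_source=chatgpt.com", {})
# A desktop click on an untagged inline link: Referer present, no UTM.
clicked = attribution_signals("https://example.com/guide",
                              {"Referer": "https://chatgpt.com/"})
print(pasted, clicked)
```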

On June 13, 2025, OpenAI extended UTM parameters to the "More sources" section of replies. Glenn Gabe flagged it on X within hours: "Heads-up, this should jump the traffic levels in GA4 and other analytics tools for ChatGPT. That's because ChatGPT finally added utm parameters for 'More' sources versus just the citations. Those links used to be standard links without utm parameters." Read that the other way round: every traffic graph Gabe drew before June 13, 2025 undercounted real ChatGPT referral volume by whatever share the "More" section carried, and that share went unmeasured.

What's still untagged: the conversational inline links buried in paragraph text, on both free and paid tiers; every link opened from the mobile apps, on either OS; and anything produced outside Search mode. That's most of the links ChatGPT writes.

In our April 2026 Clickport dataset, 867 of 2,619 AI Search sessions arrived UTM-only with utm_source=chatgpt.com and no HTTP referrer. That's 33.1%, roughly one in three AI Search sessions on our platform with no referrer header at all. Without the UTM to save them, every one would have been indistinguishable from Direct. This is the clipboard-paste-from-citation pattern. Someone copies a cited URL out of a ChatGPT reply and opens it somewhere the referrer gets lost, and the UTM in the URL is the only thing that makes it through. Another 67 sessions arrived UTM-only for Perplexity. None for Claude, Gemini, Copilot, Kagi, or DeepSeek. Those platforms don't auto-append UTMs.

The rule of thumb is simple. If you want to trace inbound AI citations, add your own UTMs to the URLs you put into prompts. You can't tag the links ChatGPT pulls from its own index. Those carry whatever ChatGPT chose to attach, and you have no say in it.
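One way to tag your own links before they go into a prompt, sketched with the standard library. The parameter values ("chatgpt-prompt", "ai", "spring-launch") are placeholders, not a naming convention anyone recommends; pick whatever your reporting already expects.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_utm(url: str, source: str, medium: str, campaign: str) -> str:
    """Return url with utm_* parameters set, replacing any that were already there."""
    parts = urlsplit(url)
    # Keep every existing query parameter except old utm_* tags.
    params = [(key, value)
              for key, value in parse_qsl(parts.query, keep_blank_values=True)
              if not key.startswith("utm_")]
    params += [("utm_source", source),
               ("utm_medium", medium),
               ("utm_campaign", campaign)]
    return urlunsplit(parts._replace(query=urlencode(params)))


tagged = with_utm("https://example.com/pricing?plan=pro",
                  "chatgpt-prompt", "ai", "spring-launch")
print(tagged)
```

Because the tag lives in the URL string, it survives every handoff that drops the Referer header, as long as the URL itself comes back unchanged in the reply.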

The compound math: referrer stripping times consent rejection

On EU sites, the two problems stack. Two separate losses hit the same traffic. The first is referrer stripping, and it's global. The second is cookie-banner rejection, and it's heaviest in the EU.

Start with 100 ChatGPT visits to an EU publisher running GA4. About 70 of them show up with no referrer header at all: Loamly's February 2026 analysis of 20,428 AI visits found 14,413 of them, 70.6%, arrived without referrers. Take that as the rough order of magnitude, not a hard benchmark. Loamly sells AI-traffic detection, so it has a reason to want that number to look big. And the 70.6% comes from the AI subset of its own customers, not from a representative sample.

That leaves 30 visits with a referrer. Then consent rejection takes a cut before GA4 can write them down. etracker's 2025 consent benchmark puts average rejection on compliant EU banners at around 60% of visits, which would leave 40% accepting. Take a more generous assumption, that 55% of those 30 referrer-bearing visitors accept cookies, and GA4 ends up seeing about 16 (30 × 0.55 ≈ 16.5). At etracker's 60% rejection it would be 12. So out of every 100 ChatGPT visits to an EU site, roughly 16 get fully attributed, and that is the optimistic case. The other 84 either sit in Direct or never get counted at all.
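The compound loss is just two multiplications, sketched below so you can plug in your own shares. The inputs are the illustrative figures from this section, not measurements of any particular site, and the function name is made up.

```python
def fully_attributed(visits: float, no_referrer_share: float, accept_share: float) -> float:
    """Visits that both carry a referrer and accept analytics cookies."""
    with_referrer = visits * (1 - no_referrer_share)
    return with_referrer * accept_share


# 70% arrive without a referrer; 55% of the remainder accept cookies.
optimistic = fully_attributed(100, 0.70, 0.55)
# Same referrer loss, but 60% rejection (40% acceptance).
pessimistic = fully_attributed(100, 0.70, 0.40)
print(f"{optimistic:.1f} vs {pessimistic:.1f} of 100 visits")
```

On a US-heavy site you would set the acceptance share close to 1.0, which leaves referrer stripping as nearly the whole loss.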

Google Consent Mode's modeled conversions claim to win back a share of the rejection loss. The original April 2021 Google announcement said modeling recovers "more than 70% of ad-click-to-conversion journeys lost due to user cookie consent choices." That figure is five years old, and Google hasn't refreshed it since. Modeling also needs at least 1,000 daily consent-declined events before it kicks in. Most sites outside enterprise traffic never reach that floor. For those sites, the rejection loss is just a loss.

Plausible Analytics laid out the practical limit plainly. Modeling can rebuild aggregate conversion counts, but it can't rebuild user journeys, pages visited, or source attribution for the people who declined. So the Direct bucket isn't a rescue for a rejected-consent ChatGPT visitor. It's a dead end.

Outside the EU, rejection rates are a fraction of what they are inside it. CCPA and CPRA opt-out in California runs from single digits to the low teens. For US-heavy sites, referrer stripping does almost all the damage and consent does very little.

If the gap is real, the next question is what it costs you. Our analysis of what AI visitors are actually worth walks through the conversion economics behind these numbers, and why the missing share hits your revenue reporting, not just your channel reporting. For a rough read on how much GA4 misses across the board, with AI referrer stripping piling on top of the four general drivers, try the GA4 Data Loss Estimator.

The custom channel group, and what it cannot fix

GA4 lets you build a custom channel group in Admin → Data display → Channel groups. Free properties get two custom groups. And the group applies backwards: save the rule and historical sessions reclassify, so you keep your past data.

Here's the regex Google suggests in the AI Assistants example, the one added to the docs in July 2025:

^.*ai|.*\.openai.*|.*chatgpt.*|.*gemini.*|.*gpt.*|.*copilot.*|.*perplexity.*|.*google.*bard.*|.*bard.*google.*|.*bard.*|.*.*gemini.*google.*$

It's a starting point, not a finished rule. It misses Claude, Phind, Kagi, You.com, DeepSeek, Grok, Andi, and Meta AI, and as of October 2025 it misses ChatGPT Atlas as a distinct browser signal too. Here's a fuller April 2026 version:

(chatgpt\.com|chat\.openai\.com|perplexity\.ai|claude\.ai|gemini\.google\.com|copilot\.microsoft\.com|phind\.com|kagi\.com|you\.com|deepseek\.com|grok\.com|meta\.ai|andi\.ai)

Set the match condition to Source matches regex. Put the rule above the default Referral rule so AI sessions pull out before Referral can grab them. Don't require a UTM medium. The AI platforms that do set UTMs set utm_source only, with no utm_medium, so requiring a medium would knock those sessions straight back into Unassigned.
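Before saving the rule, it can help to test the pattern against the source values you actually see in your reports. The sketch below uses Python's `re` module as a stand-in. Two assumptions: the pattern behaves the same in GA4's regex engine (it only uses alternation and escaped dots), and GA4's "matches regex" condition is a full match, which `fullmatch` imitates here.

```python
import re

# The fuller April 2026 pattern from above, split across lines for readability.
AI_SOURCES = re.compile(
    r"(chatgpt\.com|chat\.openai\.com|perplexity\.ai|claude\.ai|"
    r"gemini\.google\.com|copilot\.microsoft\.com|phind\.com|kagi\.com|"
    r"you\.com|deepseek\.com|grok\.com|meta\.ai|andi\.ai)"
)


def is_ai_source(source: str) -> bool:
    """True if the whole source value is one of the listed AI domains."""
    return AI_SOURCES.fullmatch(source.strip().lower()) is not None


for candidate in ["chatgpt.com", "www.perplexity.ai", "google", "news.ycombinator.com"]:
    print(candidate, is_ai_source(candidate))
```

Under a full match, a source recorded with a subdomain such as www.perplexity.ai would not be caught, so check how each platform shows up in your own Source dimension before relying on the list.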

Because the reclassification runs backwards, this is a one-time setup that pays off in both directions. Build it in April 2026 with 12 months of history behind you, and the whole year re-buckets cleanly into a new AI channel.

What the custom channel can't fix is the one-third of AI traffic that never sends a referrer and never carries a UTM. That share sits in Direct for good. No regex can reach it. There's nothing to match on. Custom channel groups pull the high-signal AI traffic out of Referral. They leave the low-signal AI traffic in Direct.

A quick note on Google's own AI products. Google AI Mode launched with rel="noreferrer" on its outbound citation links, which wiped the referrer entirely and dropped those clicks into Direct, the same pattern as ChatGPT. Lily Ray at Amsive called it "Not Provided 2.0," a nod to the 2011 keyword-data loss. Google fixed it by May 31, 2025: AI Mode clicks now pass google.com as the referrer and turn up in Search Console's web search performance report. In GA4 they look just like regular organic search. The signal arrives. It just lands in the wrong bucket.

Measure what is in your Direct bucket

If your Direct channel has grown out of proportion and nothing about your brand activity explains it, ask how much of that growth follows your AI visibility. GA4 alone won't hand you the answer. The traffic you most want to count is the traffic your analytics can't see. So you triangulate.

Watch three numbers over the same window. One: Direct sessions as a share of total, month over month. Two: sessions attributed to any AI platform (ChatGPT, Perplexity, Claude, Gemini) in whatever form your setup happens to catch them, whether that's Referral, a custom channel, or a UTM filter. Three: brand-name organic search impressions from Google Search Console, the closest stand-in for the people who meet you in an AI conversation and then type your brand name into Google.

If the first number climbs and the other two climb with it, AI discovery is almost certainly part of what's piling into Direct. If the first climbs and the other two stay flat, the cause is somewhere else: bot traffic, a campaign that lost its URL tags, a mobile app build that shed its tracking.
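The reading above can be written down as a small helper, assuming you have exported the three monthly series yourself. The trend test is deliberately crude (last value against first), and the function names and return strings are made up for illustration.

```python
from typing import Sequence


def rising(series: Sequence[float]) -> bool:
    """Crude trend test: is the last value higher than the first?"""
    return len(series) >= 2 and series[-1] > series[0]


def read_direct_growth(direct_share: Sequence[float],
                       ai_sessions: Sequence[float],
                       brand_impressions: Sequence[float]) -> str:
    """Apply the three-number triangulation to monthly series."""
    if not rising(direct_share):
        return "Direct share is not growing"
    ai_up, brand_up = rising(ai_sessions), rising(brand_impressions)
    if ai_up and brand_up:
        return "AI discovery is a plausible contributor"
    if not ai_up and not brand_up:
        return "look elsewhere: bots, lost URL tags, app tracking"
    return "mixed signals"


print(read_direct_growth([0.20, 0.24, 0.29], [40, 55, 90], [1000, 1300, 1700]))
```

A real check would want a longer window and some allowance for seasonality; this only encodes the direction-of-travel logic.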

Treat the output as a thinking tool, nothing more. The real share of your Direct bucket that's AI depends on who your audience is, how much of them is on mobile, and whether your brand shows up in ChatGPT replies in the first place.

What Clickport catches that default GA4 does not

Clickport sorts every visit into one of 16 channels, and one of them is a dedicated AI Search channel. The classifier knows 13 AI platforms by referrer or utm_source, runs before the Organic Search rule so AI traffic pulls out cleanly, and works the same way on every site. No regex to maintain.

In our April 2026 data across 371,847 sessions on Clickport customer sites, AI Search came to 2,619 sessions, or 0.7% of the total. ChatGPT drove 1,994 of those, 76% of all the AI traffic we saw. That points the same way as Conductor's 2026 benchmark, which puts ChatGPT at 87.4% of AI referrals across a much bigger 13,770-domain sample. Whatever your AI Search share turns out to be, ChatGPT is most of it. Of our 2,619 sessions, 1,684 came in with a referrer, the slice a custom GA4 channel group would recover. The other 935 came in UTM-only with no referrer, the slice that lands in Unassigned in GA4 unless your utm_medium handling is set up right.

On the subset of Clickport customer sites with conversion goals set up (n=87 AI Search sessions and 1,184 Direct sessions over 30 days), AI Search converted at 20.7% and Direct at 11.1%. The sample is small and self-selected, so read it as a direction, not a benchmark. It lines up with Seer Interactive's ChatGPT case study, which measured 15.9% on a single B2B client, and Further's aggregate LLM data of roughly 18% across their client base. So the AI traffic you can measure converts close to twice as well as Direct. Which makes it sting more, not less, that most of it hides in Referral and Direct.

One thing worth watching alongside this: the AI visibility scoring tools. If you're weighing up Profound, Peec, Otterly, or anything like them for brand-mention tracking, read our critique of AI visibility scores as vanity metrics before you buy one. Counting real visits to your site beats counting prompts you wrote yourself.

Here's what Clickport doesn't do. It can't magically see traffic that shows up with no referrer and no UTM. That traffic is still Direct in Clickport, same as anywhere else. The real win sits on the consent side. Clickport is cookieless. No banner, no rejection, no consent-mode modeling. Every visit gets counted the same way, so the "fully attributed on an EU site" share climbs to roughly 30% of actual visits instead of the compound 16%. I can't unstrip a referrer. Nobody can. What I can do is stop you bleeding the rest of it to a consent banner on top.

If your Direct bucket is growing and you want to see what's inside it, try Clickport free for 30 days. One script tag, no credit card. Your first AI Search session shows up in the Sources panel the moment someone arrives from ChatGPT.

Frequently asked questions

Why doesn't GA4 have a native AI channel in 2026?

Google hasn't said. Here's what the public record shows: Google folded AI Overviews and AI Mode traffic into Organic Search, because both come from google.com referrers, and it added an AI assistants example to the Custom Channel Groups docs in July 2025. To build an AI channel into the defaults, Google would have to either decide which platforms count or write a match rule on more than just the referrer domain. Neither has shipped.

Can I back-fill historical sessions that were miscategorized as Direct?

Only the subset that carried a UTM or a referrer. GA4's custom channel groups apply retroactively, so sessions with chatgpt.com in the referrer or utm_source field reclassify into your new AI channel back through the property's history. Sessions that arrived with no signal at all stay in Direct for good. There's no source/medium data sitting there to reclassify.

Does adding UTMs to links I share in ChatGPT actually help?

Yes, for the links you share yourself. Paste a link into a ChatGPT prompt, get it back in the reply, and your UTM rides along with the URL so the click gets attributed. It does nothing for the links ChatGPT pulls from its own index mid-reply. Those carry whatever UTM OpenAI chose to attach, which since June 2025 means utm_source=chatgpt.com on citation and "More sources" links, and nothing at all on the inline conversational ones.

Will ChatGPT Atlas inflate my new-user count in GA4?

Probably yes, for any returning visitor who switches to Atlas. Atlas doesn't import Chrome cookies when it sets up a profile, so each existing visitor shows up as a fresh GA4 client ID the first time they come back through it. The distortion fades as Atlas builds up its own cookies, but the first months after people adopt Atlas will overstate new users and understate returning ones.

Is referrer data recoverable server-side?

Not if the browser never sent it. The HTTP Referer header comes from the client. If the browser holds it back, through Referrer-Policy, rel=noreferrer, or in-app browser behavior, your server-side code has no Referer to log. Server-side tracking solves real problems like consent blocks and ad-blocker interference. Recovering a stripped referrer isn't one of them.

Does this problem apply to Plausible, Fathom, and Matomo too?

Yes, for the no-signal share. Any analytics tool that leans on the HTTP Referer header and URL parameters sees the same Direct bucket for referrer-stripped, UTM-less visits. The difference is in the default channel grouping. Some privacy-first tools ship an AI channel in their defaults, which peels the high-signal AI traffic away from regular referrals without a custom regex. On the consent side, cookieless tools step around consent rejection, which shrinks the compound loss for EU sites. But none of them fix the deeper problem of traffic that arrives carrying no signal at all.

Your Direct bucket will keep growing. Some of that growth is the ordinary kind: type-ins, bookmarks, broken chains. Some of it is AI traffic that never carried a referrer. The way to tell them apart isn't to fix Direct. It's to catch the signal before it disappears into Direct, and route that traffic to a channel that explains itself.
