How to A/B Test Your Website Without Paying for Tools

![A screenshot of Visual Studio Code with the file assignment.js open in a tab. The editor shows the getVariant function from line 1 to line 18: a comment '// 20-line A/B test variant assignment', the function definition that uses localStorage.getItem and localStorage.setItem, a try/catch block handling Safari private browsing, and a final call site 'var v = getVariant("hero_cta", ["control", "treatment"]);'. The left file tree shows assignment.js highlighted with sibling files index.html, styles.css, tracking.js, package.json, README.md. An annotation reads 'The entire A/B testing engine. 15 lines of vanilla JavaScript. No SDK, no build step, no vendor account.' A second annotation reads 'Handles Safari private browsing where localStorage.setItem throws SecurityError. Visitor gets a random variant per page load. Rounding error over a 14-day test.' A third annotation reads 'One function call. Returns control or treatment. Persisted to localStorage for return visits.'](/blog-assets/ab-testing-without-tools-hero.webp)


- What Google Optimize left behind

- What an A/B test actually is

- The 20-line JavaScript split

- Tracking variants with custom events

- How many visitors you actually need

- When to stop (and why you should not peek)

- Is your result real?

- The privacy question nobody asks

- What paid tools give you (that this approach does not)

- Frequently asked questions

- The three-line summary

I spent two weeks evaluating VWO, Optimizely, and Convert before realizing I was overcomplicating a problem that takes 20 lines of JavaScript to solve. Google Optimize is gone. The cheapest replacement costs $299/month. But a simple A/B test is just random assignment, variant display, and conversion measurement. Your analytics tool probably handles the third already, and the first two take 20 lines of JavaScript.

- Google Optimize shut down in September 2023. It dominated the free A/B testing market. The cheapest dedicated replacement (Convert) costs $299/month. GrowthBook and Statsig offer free tiers but require technical setup.

- A working A/B test needs three things: random assignment, variant display, and conversion measurement. The first two take 20 lines of JavaScript. The third is a custom event in your analytics tool.

- At 95% confidence and 80% power, a site with a 3% conversion rate needs ~28,000 visitors (about 14,000 per variant) to detect a 20% relative improvement. At 500 daily visitors, that takes 56 days. Low-traffic sites should test bold changes, not button colors.

- Evan Miller's research shows that checking A/B test results early inflates false positives from 5% to 26%. Pre-calculate your sample size. Don't look until you hit it.

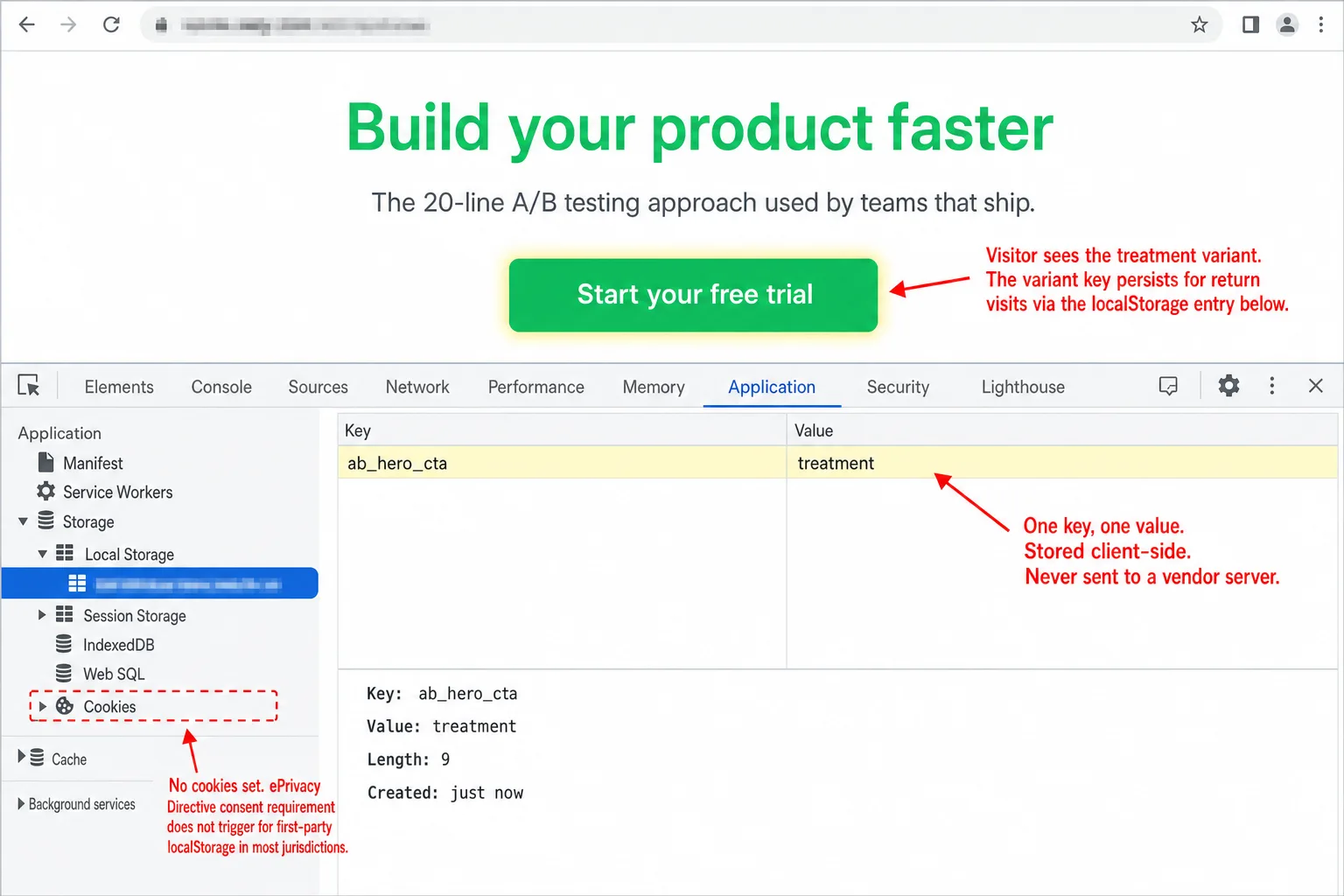

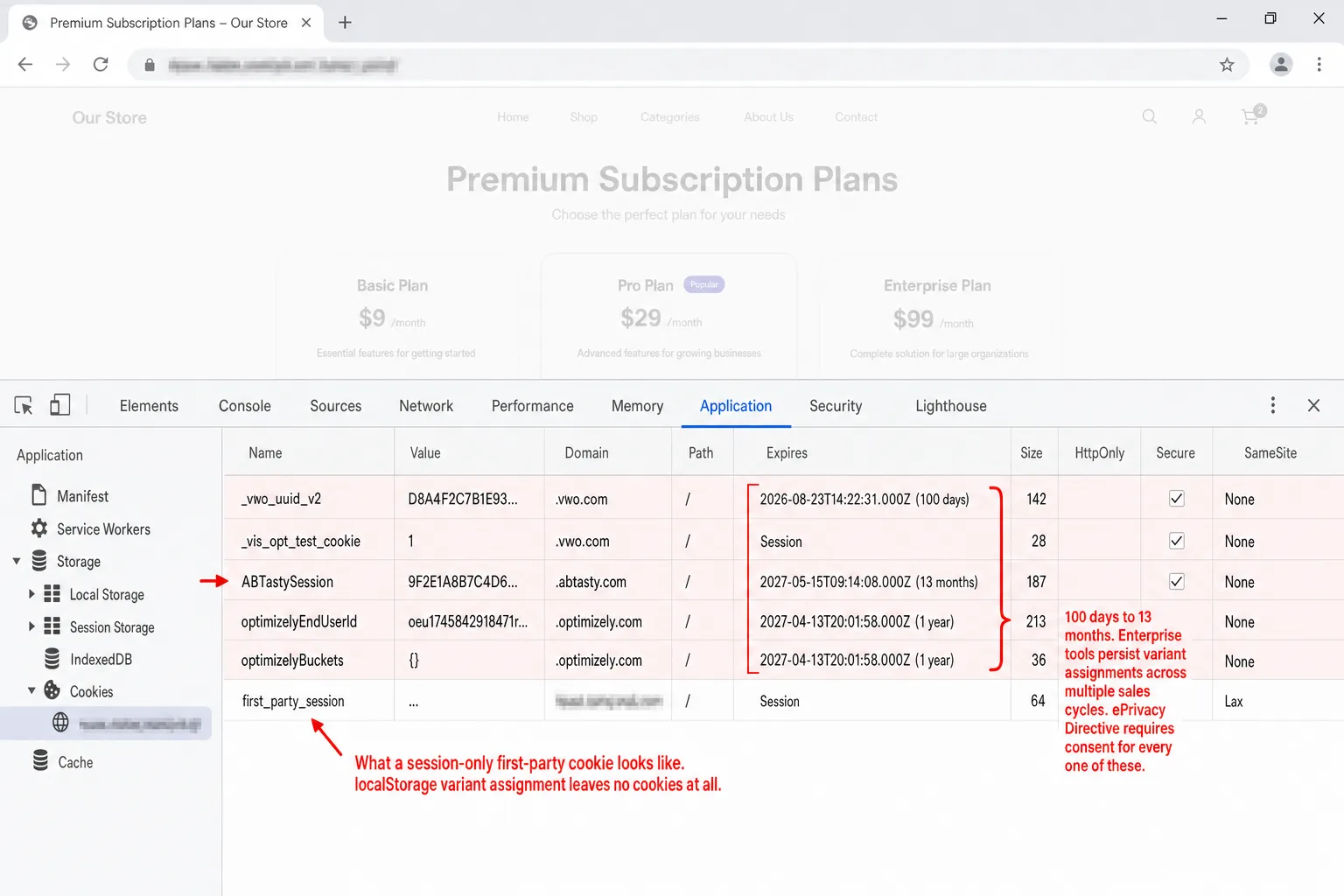

- Enterprise A/B testing tools set cookies with 100-day to 13-month lifetimes and transmit data to vendor servers. Under the ePrivacy Directive, this requires consent. A client-side localStorage approach with no vendor keeps the privacy footprint minimal.

What Google Optimize left behind

Google Optimize shut down on September 30, 2023. It dominated the free A/B testing market and was used on over 500,000 websites before the shutdown. Google's suggested replacements were Optimizely, VWO, and AB Tasty.

Here is what those cost:

One Hacker News commenter described the jump after Optimizely's acquisition: "We were a monthly customer... for a few hundred dollars a month. Then they went to the annual cost of $30K+ upfront and ended all monthly options."

Free tiers exist. GrowthBook is open source and genuinely free to self-host. Statsig gives you 2 million events per month for free. PostHog includes A/B testing in its free tier up to 1 million events. But all three require technical setup, SDK integration, and a data warehouse or event pipeline to get real value.

There is a simpler path. If you want to test whether "Start your free trial" converts better than "Get started," you do not need any of these tools. You need 20 lines of JavaScript and an analytics tool that tracks custom events.

What an A/B test actually is

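Strip away the jargon and an A/B test has three components:

1. Random assignment: each visitor is placed in one variant at random and stays there on return visits.

2. Variant display: each visitor sees the version they were assigned.

3. Conversion measurement: you record which variant each visitor saw and whether they converted.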

If your analytics tool tracks custom events, you already have step 3. Steps 1 and 2 are vanilla JavaScript. The Obama 2008 campaign tested 24 combinations of buttons and images on their splash page and found a winner that lifted signups by 40.6%, translating to an estimated $60 million in additional donations. A/B testing works. The question is whether you need to pay $300/month to do it.

The 20-line JavaScript split

Here is a complete, production-ready variant assignment function. It uses localStorage to persist the assignment so each visitor always sees the same version:

```javascript
function getVariant(testId, variants) {
  var key = 'ab_' + testId;
  var variant;

  try {
    // Reuse a stored assignment if it is still one of the valid variants
    variant = localStorage.getItem(key);
    if (!variant || variants.indexOf(variant) === -1) {
      // First visit (or the variant list changed): pick uniformly at random
      variant = variants[Math.floor(Math.random() * variants.length)];
      localStorage.setItem(key, variant);
    }
  } catch (e) {
    // Safari private browsing or storage disabled: assign per page load
    variant = variants[Math.floor(Math.random() * variants.length)];
  }

  return variant;
}
```

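That is the entire assignment engine. The try/catch covers browsers where storage is unavailable: older Safari versions (before Safari 11) throw a QuotaExceededError from localStorage.setItem() in private browsing, and browsers with site data blocked can throw a SecurityError as soon as localStorage is accessed. In that case, the visitor gets a random assignment on every page load. These visitors are usually a small share of traffic, so over a multi-week test the effect is minor.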
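Before launch, it can be worth checking that the split is roughly even and that assignments stick. A minimal sketch, assuming you run it in Node or a test runner: memoryStorage and getVariantWith are hypothetical testing helpers (not part of the guide's code), and getVariantWith mirrors getVariant above except that the storage object is passed in so an in-memory fake can stand in for localStorage.

```javascript
// In-memory stand-in for localStorage (getItem returns null when missing,
// like the real API).
function memoryStorage() {
  var data = new Map();
  return {
    getItem: function (k) { return data.has(k) ? data.get(k) : null; },
    setItem: function (k, v) { data.set(k, String(v)); }
  };
}

// Same logic as getVariant(), with the storage injected for testing.
function getVariantWith(storage, testId, variants) {
  var key = 'ab_' + testId;
  var variant;
  try {
    variant = storage.getItem(key);
    if (!variant || variants.indexOf(variant) === -1) {
      variant = variants[Math.floor(Math.random() * variants.length)];
      storage.setItem(key, variant);
    }
  } catch (e) {
    variant = variants[Math.floor(Math.random() * variants.length)];
  }
  return variant;
}

// 1. Split: 10,000 first-time visitors, each with empty storage.
var counts = { control: 0, treatment: 0 };
for (var i = 0; i < 10000; i++) {
  counts[getVariantWith(memoryStorage(), 'hero_cta', ['control', 'treatment'])]++;
}

// 2. Stickiness: one visitor, 100 page loads, same answer every time.
var visitor = memoryStorage();
var first = getVariantWith(visitor, 'hero_cta', ['control', 'treatment']);
var sticky = true;
for (var j = 0; j < 100; j++) {
  if (getVariantWith(visitor, 'hero_cta', ['control', 'treatment']) !== first) sticky = false;
}

console.log(counts, sticky);
```

With a fair random split, each count should land close to 5,000; a large skew points to a bug in the assignment code.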

Preventing the flash of original content

The biggest technical pitfall in client-side A/B testing is the flash of original content: the visitor sees the original version for a split second before JavaScript swaps it. The fix is a synchronous inline script in <head> that runs before the browser paints anything:

```html
<head>
  <script>
    (function() {
      try {
        // Same key and values as getVariant('hero_cta', ['control', 'treatment'])
        var v = localStorage.getItem('ab_hero_cta');
        if (v !== 'control' && v !== 'treatment') {
          v = Math.random() < 0.5 ? 'control' : 'treatment';
          localStorage.setItem('ab_hero_cta', v);
        }
        document.documentElement.setAttribute('data-ab-hero', v);
      } catch(e) {
        // Storage blocked: show the control version
        document.documentElement.setAttribute('data-ab-hero', 'control');
      }
    })();
  </script>
</head>
```

Then use CSS attribute selectors to style each variant. No DOM manipulation needed:

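```css
/* Control keeps the existing hero styles */
[data-ab-hero="control"] .hero-title { }
[data-ab-hero="treatment"] .hero-title { color: #2cc96b; }

/* The CTA label comes from CSS, so leave the button's own text empty */
[data-ab-hero="control"] .cta-button::after { content: "Get started"; }
[data-ab-hero="treatment"] .cta-button::after { content: "Start your free trial"; }
```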

The variant is baked into a data-* attribute on <html> before the browser renders anything. Zero flash, zero DOM thrashing.
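The tracking code later in this guide calls getVariant() a second time. If storage is blocked, the inline script shows control while getVariant() re-rolls at random, so an event can report a variant the visitor never saw. One way to avoid that mismatch is to read the variant back from the attribute the inline script already set. readVariant is a hypothetical helper, not part of any library:

```javascript
// Return the variant the inline <head> script rendered, so exposure and
// conversion events always match what the visitor actually saw.
// root is normally document.documentElement.
function readVariant(root, attrName, variants, fallback) {
  var v = root.getAttribute(attrName); // null if the attribute is missing
  // Only trust known values; anything else falls back (e.g. 'control')
  return variants.indexOf(v) !== -1 ? v : fallback;
}

// In the browser:
// var variant = readVariant(document.documentElement, 'data-ab-hero',
//                           ['control', 'treatment'], 'control');
```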

QA override

Add a URL parameter override so you can preview each variant without clearing localStorage:

```javascript
// Add at the top of getVariant(), before the try block
var params = new URLSearchParams(location.search);
var forced = params.get('ab_' + testId);
if (forced && variants.indexOf(forced) !== -1) return forced;
```

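Now ?ab_hero_cta=treatment forces getVariant() to return the treatment variant. Because the override returns early, it is never saved to localStorage. The inline <head> script reads localStorage directly, so if you style variants with CSS, add the same check there as well; otherwise the page keeps rendering the stored variant. Share the URL with your team to review before going live.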
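A sketch of the inline <head> logic with a QA override built in, written as a function so the browser globals are passed in rather than hard-coded. assignVariant and its env object are hypothetical names. Calling env.getStorage() inside the try block matters because some browsers throw as soon as localStorage is touched when storage is blocked. The function returns the variant it rendered, so exposure tracking can reuse that value instead of assigning again.

```javascript
// Pick a variant, honor a ?ab_<testId>=<variant> QA override,
// and mark <html> before the first paint.
function assignVariant(testId, variants, attrName, env) {
  var key = 'ab_' + testId;
  var v = null;

  // The QA override wins and is NOT saved, so previews do not stick
  var forced = new URLSearchParams(env.search).get(key);
  if (forced && variants.indexOf(forced) !== -1) {
    v = forced;
  } else {
    try {
      var storage = env.getStorage(); // may throw if storage is blocked
      v = storage.getItem(key);
      if (!v || variants.indexOf(v) === -1) {
        v = variants[Math.floor(Math.random() * variants.length)];
        storage.setItem(key, v);
      }
    } catch (e) {
      v = variants[0]; // storage unavailable: show the first (control) variant
    }
  }

  env.root.setAttribute(attrName, v);
  return v; // reuse this value for exposure tracking
}

// Inline in <head>:
// var heroVariant = assignVariant('hero_cta', ['control', 'treatment'], 'data-ab-hero', {
//   search: location.search,
//   getStorage: function () { return window.localStorage; },
//   root: document.documentElement
// });
```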

Tracking variants with custom events

Assignment and display are handled. Now you need measurement. Fire a custom event on page load to record which variant the visitor saw, and fire another event when they convert.

```javascript
var variant = getVariant('hero_cta', ['control', 'treatment']);

// Record exposure
clickport.track('AB Test', {
  test: 'hero_cta',
  variant: variant
});

// Record conversion. Here the success action is a click on the CTA;
// move this to your real success action (e.g. a completed signup).
var button = document.querySelector('.cta-button');
if (button) {
  button.addEventListener('click', function() {
    clickport.track('Signup', {
      test: 'hero_cta',
      variant: variant
    });
  });
}
```

The custom events API accepts up to 30 properties per event, each stored as a key-value pair. Use one event name (AB Test) with a variant property rather than separate event names per variant. The dashboard's property breakdown shows per-variant counts automatically.
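Those per-variant counts also let you check that assignment is working. With a 50/50 split the two counts should be close; a large gap, known as a sample ratio mismatch (SRM), usually means a bug such as a script error that drops one variant's visitors. A minimal sketch of a chi-square check for two variants, assuming you copy the counts from the dashboard by hand. Ideally use unique visitors rather than raw event counts, since the exposure event above fires on every page load. srmCheck is a hypothetical name, and the p < 0.001 threshold is a common convention, not a rule.

```javascript
// Sample ratio mismatch check for a 50/50 test: chi-square goodness of fit
// on the two exposure counts (1 degree of freedom).
// 10.83 is the chi-square critical value for p = 0.001 at 1 degree of freedom.
function srmCheck(countA, countB) {
  var expected = (countA + countB) / 2;
  var chi2 =
    Math.pow(countA - expected, 2) / expected +
    Math.pow(countB - expected, 2) / expected;
  return { chi2: chi2, mismatch: chi2 > 10.83 };
}

srmCheck(5000, 4900); // chi2 ≈ 1.01, no mismatch
srmCheck(5000, 4500); // chi2 ≈ 26.3, mismatch: investigate before trusting results
```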

To track revenue, add a third parameter:

```javascript
clickport.track('Purchase', {
  test: 'hero_cta',
  variant: variant
}, { amount: 49.99, currency: 'USD' });
```

Then create a Goal that matches the conversion event name. The Goals panel shows conversion counts and rates, which you compare across variants to find your winner.

The full implementation, from assignment to conversion tracking, is under 30 lines of JavaScript. No SDK. No build step. No vendor account.

How many visitors you actually need

This is where most A/B testing guides get vague. They mention "statistical significance" and move on. Here is the honest math.

A sample size calculation needs three inputs:

- Baseline conversion rate: your current rate (e.g., 3%)

- Minimum detectable effect (MDE): the smallest improvement worth detecting (e.g., 20% relative, meaning from 3% to 3.6%)

- Significance level: how much risk of a false positive you accept (standard: 5%, which corresponds to 95% confidence)

The formula, assuming 80% statistical power:

n ≈ 7.849 × (p₁(1-p₁) + p₂(1-p₂)) / (p₂ - p₁)²

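Where p₁ is your baseline rate, p₂ is the improved rate, and n is the number of visitors needed per variant (double it for a two-variant test). The constant 7.849 comes from (1.96 + 0.8416)², which encodes a 5% two-tailed significance level (95% confidence) and 80% power.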
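A direct JavaScript translation of the formula, handy for checking figures without a calculator. sampleSizePerVariant and daysNeeded are hypothetical helper names; the z-values are hard-coded for 95% confidence (two-tailed) and 80% power, and relativeMde is the relative lift as a fraction (0.20 for 20%).

```javascript
// n ≈ (1.96 + 0.8416)² × (p1(1 − p1) + p2(1 − p2)) / (p2 − p1)², per variant
function sampleSizePerVariant(baselineRate, relativeMde) {
  var p1 = baselineRate;
  var p2 = baselineRate * (1 + relativeMde);
  var k = Math.pow(1.96 + 0.8416, 2); // ≈ 7.849
  var n = k * (p1 * (1 - p1) + p2 * (1 - p2)) / Math.pow(p2 - p1, 2);
  return Math.ceil(n);
}

// Days to finish a two-variant test, given daily visitors to the tested page
function daysNeeded(baselineRate, relativeMde, dailyVisitors) {
  return Math.ceil((2 * sampleSizePerVariant(baselineRate, relativeMde)) / dailyVisitors);
}

var perVariant = sampleSizePerVariant(0.03, 0.20); // about 13,900
var total = 2 * perVariant;                        // about 27,800
var days = daysNeeded(0.03, 0.20, 500);            // 56
```

These inputs reproduce the figures quoted at the top of the article: roughly 28,000 visitors in total and 56 days at 500 visitors a day.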

You do not need to memorize this. Use the calculator below, or Evan Miller's calculator which is the industry standard.

The uncomfortable truth about low traffic

Play with the calculator. A site with 100 daily visitors and a 2% conversion rate needs roughly 21,000 visitors per variant (about 42,000 in total) to detect a 20% improvement. That is over 400 days: more than a year for one test.

Booking.com runs over 25,000 experiments a year because they have billions of pageviews. Only 10% of those experiments show a positive result. At Microsoft, one-third of experiments produce positive results, one-third are flat, and one-third are negative.

If your site gets fewer than 5,000 weekly visitors to the page you want to test, standard A/B testing will not produce reliable results in a reasonable timeframe. That does not mean you should stop optimizing. It means you should:

- Test bold changes, not button colors. A completely different headline has a larger effect than a shade of green. Larger effects need fewer visitors to detect.

- Set your MDE to 50% or higher. You are looking for big wins, not 5% improvements.

- Use qualitative research alongside testing. Five user interviews reveal more about why visitors leave than a six-month split test on button text.
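For low-traffic pages it can help to flip the question: given the visitors you can collect in a fixed window, what is the smallest relative lift you could detect? A sketch that searches for that MDE using the same 95% / 80% formula. requiredPerVariant and minDetectableLift are hypothetical names, and the search simply bisects between a 0.1% and a 1000% lift (capped so the improved rate never exceeds 100%).

```javascript
// Visitors per variant needed to detect a given relative lift (95% / 80%)
function requiredPerVariant(p1, lift) {
  var p2 = p1 * (1 + lift);
  var k = Math.pow(1.96 + 0.8416, 2);
  return k * (p1 * (1 - p1) + p2 * (1 - p2)) / Math.pow(p2 - p1, 2);
}

// Smallest detectable relative lift for a fixed number of visitors per variant.
// Bisection works because the required sample size shrinks as the lift grows.
// If even the upper bound is not detectable, the upper bound is returned.
function minDetectableLift(baselineRate, visitorsPerVariant) {
  var lo = 0.001;
  var hi = Math.min(10, 1 / baselineRate - 1);
  for (var i = 0; i < 60; i++) {
    var mid = (lo + hi) / 2;
    if (requiredPerVariant(baselineRate, mid) > visitorsPerVariant) lo = mid;
    else hi = mid;
  }
  return hi;
}

// 100 visitors a day for 4 weeks, split 50/50, at a 2% baseline:
var lift = minDetectableLift(0.02, (100 * 28) / 2); // about 0.885
```

In other words, at that traffic only a change that nearly doubles conversions would show up within four weeks, which is why bold changes are the only realistic option.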

When to stop (and why you should not peek)

Evan Miller's 2010 article "How Not to Run an A/B Test" is the most important thing ever written about A/B testing for non-statisticians.

His finding: if you check your test results repeatedly and stop when they first look significant, your actual false positive rate is not 5%. It is 26%.

The reason: statistical significance fluctuates wildly with small samples. Early in a test, random noise can create apparent differences that disappear with more data. If you look 10 times and stop when you first see p < 0.05, you have effectively given yourself 10 chances to get a false positive.
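The effect is easy to reproduce with an A/A simulation: both variants share the same true conversion rate, so every "significant" result is a false positive. This sketch compares looking once at the end with running a two-proportion z-test at 10 evenly spaced checkpoints and stopping at the first p < 0.05. The seeded generator (mulberry32), the 5% conversion rate, and the visitor counts are arbitrary illustration choices, and the inflated rate you get depends on how many times you peek, so it will not match Evan Miller's figure exactly.

```javascript
// Small seeded PRNG (mulberry32) so the simulation is reproducible
function mulberry32(seed) {
  var a = seed | 0;
  return function () {
    a = (a + 0x6D2B79F5) | 0;
    var t = Math.imul(a ^ (a >>> 15), a | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// |z| for a two-proportion z-test with a pooled standard error
function zScore(convA, nA, convB, nB) {
  var pooled = (convA + convB) / (nA + nB);
  var se = Math.sqrt(pooled * (1 - pooled) * (1 / nA + 1 / nB));
  return se === 0 ? 0 : Math.abs(convB / nB - convA / nA) / se;
}

// visitorsPerArm must be divisible by looks
function simulate(runs, visitorsPerArm, looks, rate, seed) {
  var rand = mulberry32(seed);
  var step = visitorsPerArm / looks;
  var falsePosFinal = 0, falsePosPeeking = 0;
  for (var r = 0; r < runs; r++) {
    var a = 0, b = 0, stopped = false;
    for (var n = 1; n <= visitorsPerArm; n++) {
      if (rand() < rate) a++;
      if (rand() < rate) b++;
      if (n % step === 0) {
        var significant = zScore(a, n, b, n) > 1.96; // p < 0.05, two-tailed
        if (significant && !stopped) { stopped = true; falsePosPeeking++; }
        if (n === visitorsPerArm && significant) falsePosFinal++;
      }
    }
  }
  return { lookOnce: falsePosFinal / runs, peek: falsePosPeeking / runs };
}

var result = simulate(1000, 5000, 10, 0.05, 42);
console.log(result); // lookOnce stays near 0.05; peek comes out several times higher
```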

Three rules that prevent this:

1. Pre-calculate your sample size before starting. Use the calculator above. Write down the number. That is when you stop.

2. Run for complete weeks: at least one, ideally two. Keep going even if you hit your sample size on day 5. Monday visitors behave differently from Sunday visitors, and full weekly cycles smooth out those day-of-week effects.

3. Don't look at results until both conditions are met. Sample size reached AND minimum duration passed. This is the hardest rule to follow. It is also the most important.
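The three rules can be turned into a small gate you check before opening the results, so the decision is mechanical rather than tempting. readyToRead is a hypothetical helper; targetPerVariant is the number you wrote down in rule 1, and minDays is whatever minimum duration you committed to (14 in the example).

```javascript
// Only read results once BOTH the pre-registered sample size
// and the minimum duration have been reached.
function readyToRead(opts) {
  var msPerDay = 24 * 60 * 60 * 1000;
  var daysRun = Math.floor((opts.now - opts.start) / msPerDay);
  var enoughVisitors = Math.min(opts.visitorsA, opts.visitorsB) >= opts.targetPerVariant;
  var enoughDays = daysRun >= opts.minDays;
  return {
    ready: enoughVisitors && enoughDays,
    daysRun: daysRun,
    enoughVisitors: enoughVisitors,
    enoughDays: enoughDays
  };
}

var status = readyToRead({
  start: new Date('2024-03-04T00:00:00Z'),
  now: new Date('2024-03-09T00:00:00Z'),
  visitorsA: 14200,
  visitorsB: 14050,
  targetPerVariant: 13911,
  minDays: 14
});
// status.ready is false: the sample size is reached, but only 5 days have run
```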

Is your result real?

Your test ran for two weeks. You hit your sample size. Now you need to know: is the difference between your variants statistically significant, or just noise?

The math is a two-proportion z-test. In plain language: take the conversion rates of both variants, account for the sample sizes, and calculate the probability of seeing a difference at least this large if the two variants actually performed the same.
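A minimal two-proportion z-test in JavaScript, using a pooled standard error and the Abramowitz and Stegun 7.1.26 polynomial approximation of erf for the normal distribution. twoProportionTest is a hypothetical name, and the example counts are made up for illustration.

```javascript
// Abramowitz & Stegun 7.1.26 approximation of erf (max error about 1.5e-7)
function erf(x) {
  var sign = x < 0 ? -1 : 1;
  x = Math.abs(x);
  var t = 1 / (1 + 0.3275911 * x);
  var poly = ((((1.061405429 * t - 1.453152027) * t + 1.421413741) * t
    - 0.284496736) * t + 0.254829592) * t;
  return sign * (1 - poly * Math.exp(-x * x));
}

// Two-tailed two-proportion z-test with a pooled standard error
function twoProportionTest(convA, visitorsA, convB, visitorsB) {
  var rateA = convA / visitorsA;
  var rateB = convB / visitorsB;
  var pooled = (convA + convB) / (visitorsA + visitorsB);
  var se = Math.sqrt(pooled * (1 - pooled) * (1 / visitorsA + 1 / visitorsB));
  var z = se === 0 ? 0 : (rateB - rateA) / se;
  var pValue = 1 - erf(Math.abs(z) / Math.SQRT2); // equals 2 × (1 − Φ(|z|))
  return {
    rateA: rateA,
    rateB: rateB,
    relativeLift: (rateB - rateA) / rateA,
    z: z,
    pValue: pValue,
    confidence: 1 - pValue
  };
}

// Illustrative numbers: 300 of 10,000 (control) vs 360 of 10,000 (treatment)
var r = twoProportionTest(300, 10000, 360, 10000);
// r.z is roughly 2.4 and r.pValue roughly 0.018, so confidence is above 95%
```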

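If the confidence is below 95%, you have two options: commit to a new, larger sample size and keep running the test (set that target before looking again, because extending a test until it turns significant is another form of peeking), or accept that the difference is too small to detect at your traffic level and test a bolder change.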

Convert's platform data shows that 60% of completed A/B tests deliver under 20% lift. Only about 10% of experiments at companies like Booking.com show positive results. Most tests do not produce winners. That is normal. The value is in not deploying changes that seem better but are actually noise.

The privacy question nobody asks

Every "how to A/B test" guide ignores privacy. Here is why it matters.

Enterprise A/B testing tools set cookies. VWO stores variant assignments in cookies with a 100-day lifetime. AB Tasty sets a cookie with a 13-month lifetime. Optimizely uses multiple cookies and localStorage entries. All three transmit variant data to their servers.

Under the ePrivacy Directive Article 5(3), storing information on a user's device requires consent. The CJEU confirmed this in the Planet49 ruling (2019): consent must meet full GDPR standards, even for non-personal data. The EDPB's Guidelines 2/2023 take a technology-agnostic approach that extends beyond cookies to any storage or access on a user's device.

The exception is CNIL's guidance, which explicitly exempts A/B testing from consent if it is first-party only, limited to audience measurement, and users are informed with an opt-out option. France is currently the most permissive EU jurisdiction on this point.

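The DIY approach in this guide uses localStorage, which keeps the privacy footprint small: the variant string ("control" or "treatment") stays on the visitor's device, is not shared with an A/B testing vendor, and contains no personal data. Combined with a cookieless analytics tool that does not set cookies or require consent, the stack avoids the vendor cookies and third-party data transfers that make enterprise tools a clear consent case. localStorage is still storage on the user's device under Article 5(3), though, so whether you can run without a cookie banner depends on your jurisdiction; CNIL's exemption is the clearest basis.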

Google also has SEO rules for A/B testing: use 302 redirects (not 301) for URL-based tests, add rel="canonical" on variant URLs pointing to the original page, and never show Googlebot a different version than users see. Running a test longer than necessary risks a manual action.

What paid tools give you (that this approach does not)

The DIY approach covers simple split tests: two versions of a headline, a CTA button, or a page layout. It is enough for most websites running one or two tests at a time. Paid tools earn their price when you need:

- A visual editor that lets non-developers create variants without touching code

- Multivariate testing with more than two variants across multiple elements

- Automatic traffic allocation that shifts traffic toward the winning variant in real time

- Sequential testing methods that are mathematically safe to peek at

- Team collaboration with approval workflows and shared experiment histories

If you have 100,000+ monthly visitors, a dedicated CRO team, and run multiple concurrent experiments, a paid tool pays for itself. GrowthBook is the best free option at that scale: open source, self-hostable, warehouse-native, and genuinely free with no traffic limits. For everyone else, JavaScript and custom events get you 80% of the value at 0% of the cost.

Frequently asked questions

How much traffic do I need to A/B test? It depends on your conversion rate and the size of the effect you want to detect. A site with a 3% conversion rate needs roughly 28,000 visitors in total (about 14,000 per variant) to detect a 20% relative improvement at 95% confidence. Use the calculator above to find your specific number.

Is A/B testing worth it for small websites? Only if you test bold changes. A site with 300 daily visitors cannot reliably detect a 10% improvement. But at a 2% baseline conversion rate, it can detect a 50% improvement in about 4 weeks. Test entirely different headlines, offers, or page layouts rather than button colors and font sizes.

Does A/B testing affect SEO? Not if done correctly. Google's official guidance says to use 302 redirects for URL-based variants, set rel="canonical" on variant pages pointing to the original, and avoid showing different content to Googlebot than to users.

Do I need cookie consent for A/B testing? If you use a third-party tool that sets cookies and sends data to vendor servers, yes. If you use client-side localStorage with no vendor involvement, the legal picture is less clear. CNIL explicitly exempts first-party A/B testing. Most other EU DPAs have not ruled specifically on this.

How long should I run an A/B test? A minimum of 7 days (one full business cycle), ideally 14 days. Even if you reach your target sample size sooner, wait for the full duration to account for day-of-week variation and the novelty effect.

What is the difference between A/B testing and multivariate testing? A/B testing compares two versions of a single element (headline A vs. headline B). Multivariate testing varies multiple elements simultaneously (headline × button color × image) and measures how combinations perform. Multivariate testing requires substantially more traffic.

Can I A/B test with Google Analytics? GA4 can measure custom events per variant, but it has no built-in variant assignment or display mechanism. You would need the JavaScript split from this guide plus GA4 custom dimensions to record variant exposure. The 24-48 hour data delay means you cannot monitor tests in real time.
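For GA4, a sketch of the exposure event using the gtag.js `event` command, assuming the standard gtag.js snippet is already on the page. The event name ab_test_exposure and the parameter names test_id and variant are arbitrary choices, not GA4 conventions, and variant still has to be registered as an event-scoped custom dimension in the GA4 admin before it shows up in reports. trackExposureGA4 is a hypothetical helper name.

```javascript
// Send the A/B exposure to GA4. Event and parameter names are hypothetical.
function trackExposureGA4(testId, variant) {
  if (typeof gtag !== 'function') return false; // gtag.js not loaded
  gtag('event', 'ab_test_exposure', {
    test_id: testId,
    variant: variant
  });
  return true;
}

// var variant = getVariant('hero_cta', ['control', 'treatment']);
// trackExposureGA4('hero_cta', variant);
```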

The three-line summary

Assign randomly. Display consistently. Measure with custom events. Everything else is a billing page. Your analytics tool is half the A/B testing stack. If it tracks custom events, you already have everything you need.

Start your free 30-day trial. No credit card required.
