Sudden Spike in Direct Traffic: Is It Bots? (2026 Study)


- The data: what "Direct" looks like across our network

- Three things hiding in your Direct bucket

- How bots end up classified as Direct

- Why GA4's filter catches almost none of them

- Dark social moves real humans into Direct too

- Browsers quietly stopped sharing the full referrer

- Diagnosing a spike in 15 minutes

- Is your direct-traffic spike bots? Run the numbers

- What to do once you know

- Frequently asked questions

On the biggest site we monitor, 76% of sessions show as Direct. Not Organic, not Paid, not Social. Direct. And that is what survives after our ingestion-layer bot filtering rejects every automated request it catches. Anything that slips through lands in Direct too. Every spike you investigate in that channel is a spike on top of a pool you already cannot see into.

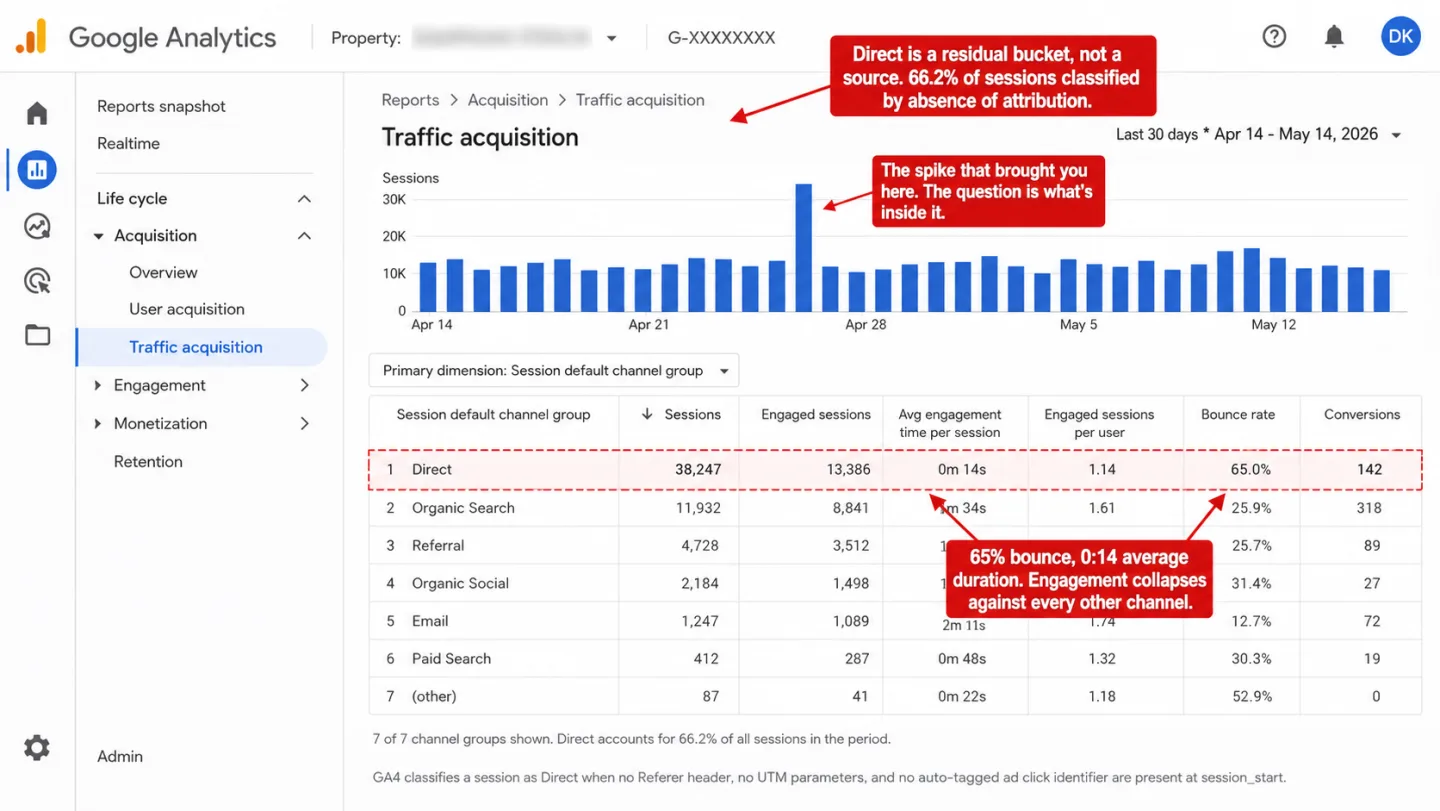

- Across the sites we monitor, 66.2% of sessions classify as Direct. On the largest, it is 76.3%. Direct is not a traffic source. It is the bucket analytics uses for sessions it cannot classify.

- Clickport runs eight ingestion-layer checks that reject bots before they become sessions. GA4 does not. It filters against one user-agent list and counts everything else, including headless Chrome on residential IPs, as human.

- SparkToro's 2023 study found 100% of visits from TikTok, Slack, Discord, WhatsApp, and Mastodon lose their referrer. All of it lands in Direct. Most of it is real humans, not bots.

- A bot spike looks different from a dark-social spike. Bots bounce near 100%, cluster in one country, and concentrate on the homepage. Dark social shows normal engagement on deep content pages. The triage takes 15 minutes.

The data: what "Direct" looks like across our network

Across the sites we monitor, 66.2% of all sessions in the last 30 days classify as Direct. On the single largest site, it is 76.3%. Direct is not a traffic source. It is the bucket an analytics tool uses when it cannot attribute a session to anything else.

In the same 30-day window, Clickport's ingestion layer rejected every automated request it caught before any of it became a session. Those requests never contaminated the dashboards. The breakdown by detection method, as a share of total blocks:

Every one of these requests would have landed in the Direct channel if it had made it through. None of them sent a Referer header. None carried UTM parameters. That is how bot traffic enters analytics tools: quietly, with no attribution data, into the one bucket where attribution does not exist.

The same pattern shows up in the surviving direct sessions. On the largest site we monitor, 65% of Direct sessions bounced, 60% had zero duration, and 70% never scrolled past the first screen. A dense layer of low-engagement traffic sits inside a channel that most analysts treat as brand-loyal return visitors typing URLs from memory.

The point is not that our numbers are unusual. The point is that your dashboard is showing you something similar and you cannot tell by looking. A sudden spike in direct traffic is almost never a sudden spike in direct traffic. It is a spike in something that could not be classified, added to a pool of things that could not be classified, rendered as one number.

Three things hiding in your Direct bucket

Direct traffic is not a source. It is an absence of sources. GA4 classifies a session as Direct when three things are missing at the same time: no Referer header, no UTM parameters, and no auto-tagged ad click identifier (gclid, dclid, msclkid). When all three are absent, GA4 sets source = (direct), medium = (none), and the session falls into the Direct channel as documented in Google's Default Channel Group reference.
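The three-way absence test is easy to express in code. The following is a minimal sketch of the rule as described above, not GA4's actual implementation; the function names and the simplified "(other)" fallback are assumptions made for illustration.

```python
from urllib.parse import urlparse, parse_qs

# Auto-tagged ad click identifiers named in the text above.
CLICK_IDS = ("gclid", "dclid", "msclkid")


def is_direct(landing_url: str, referrer: str) -> bool:
    """Return True when all three attribution signals are missing."""
    params = parse_qs(urlparse(landing_url).query)
    has_referrer = bool(referrer.strip())
    has_utm = any(key.startswith("utm_") for key in params)
    has_click_id = any(key in params for key in CLICK_IDS)
    return not (has_referrer or has_utm or has_click_id)


def source_medium(landing_url: str, referrer: str) -> tuple[str, str]:
    """Only the Direct branch is modeled; every other channel is lumped together."""
    if is_direct(landing_url, referrer):
        return ("(direct)", "(none)")
    return ("(other)", "(other)")


print(source_medium("https://example.com/pricing", ""))
print(source_medium("https://example.com/pricing?utm_source=linkedin", ""))
```

A bot, a WhatsApp click, and a typed URL all pass through the first branch, which is the whole problem.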

Three very different populations meet those conditions. They show up in the same bucket and an analyst sees them as one thing.

You cannot fix "direct traffic" as one problem because it is not one problem. A bot spike and a dark-social spike need different responses. One means tighter filtering. The other means an engagement calculation you can trust. A referrer-loss spike means an audit of your URL plumbing. Treating them as the same number is how the wrong thing gets blamed for a week.

Before we get into diagnosing which one you are staring at, here is what each looks like on the inside.

How bots end up classified as Direct

Bots end up in Direct because they do not send a Referer header. The HTTP Referer header is optional, and RFC 7231 (since superseded by RFC 9110) specifies that clients send it only when the request was triggered by a prior resource. A bot calling your page directly has no prior resource. No Referer. No attribution.

The tools that bots run on default to this behavior. curl does not send Referer by default. Neither does wget. Python's requests library, Node's axios, Playwright, Puppeteer, and Selenium all launch with no Referer unless the script explicitly sets one. Commercial scraping services (Bright Data, Oxylabs, ScraperAPI, Apify) document Referer as a header the user should add manually to look more natural, which means their default is nothing. A bot that does not bother to spoof a referrer is indistinguishable from a person who typed your URL.
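You can see the default without sending a single packet. This sketch uses Python's standard-library urllib rather than any of the tools named above, and the URLs are placeholders; it only inspects the headers a script would attach.

```python
import urllib.request

# Build a request the way a minimal script would. Nothing is sent over the network.
bare = urllib.request.Request("https://example.com/article")
bare_has_referer = bare.has_header("Referer")

# A script that wants to look like a click-through has to add the header itself.
spoofed = urllib.request.Request(
    "https://example.com/article",
    headers={"Referer": "https://www.google.com/"},
)
spoofed_has_referer = spoofed.has_header("Referer")

print(bare_has_referer, spoofed_has_referer)
```

The bare request is what most scrapers send. The second one takes a deliberate extra line, which is why so few bother.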

The problem compounds when the bot runs a full browser. Headless Chrome and Playwright execute JavaScript. That means they fire your analytics tracker. A pageview event leaves the browser with document.referrer set to an empty string, arrives at your analytics endpoint, and gets classified as Direct. A scraper that simulates 10 seconds of dwell time produces a Direct session that looks roughly like a human who bounced. At volume, this is your spike.

![A screenshot of Chrome DevTools with the Network panel and Headers tab open for a single article-page request, with four red editorial annotations. The Request Headers section is expanded and red-dashed-bordered, showing Sec-Ch-Ua, Sec-Ch-Ua-Mobile, Sec-Ch-Ua-Platform 'Linux', Sec-Fetch-Site: none, and a User-Agent spoofed as Mozilla/5.0 Linux HeadlessChrome/145.0.0.0 Safari/537.36. A conspicuous gap below the headers shows [MISSING] Referer (no Referer header sent). The General section shows Remote Address 185.220.101.42:443 and Referrer Policy strict-origin-when-cross-origin. The request list on the left shows article-page highlighted in pale red, with gtm.js and g/collect requests visible below. Annotations read 'Looks like real Chrome. The HeadlessChrome substring is the only tell GA4's IAB list can catch,' 'No Referer header. document.referrer arrives empty. The session lands as Direct,' 'Sec-Fetch-Site: none means the request was not initiated by a prior page. Bot signature, classified as Direct.' A footnote notes GA4's IAB filter catches the HeadlessChrome substring while real-world bots replace it, and that Remote Address 185.220.101.42 is in a known datacenter range not seen by GA4.](/blog-assets/direct-traffic-spike-bots-devtools-bot-headers.webp)

Residential proxies are the structural change that broke old bot detection. IP blocklists only catch traffic from known datacenter ASNs. Residential proxy networks recruit exit nodes through bundled SDKs and free VPN apps, then rent them out at premium rates precisely because the IPs carry no negative reputation signal. Academic measurement work on these networks finds that detection now requires behavioral signals, not IP reputation, because the exit nodes are indistinguishable from ordinary residential broadband.

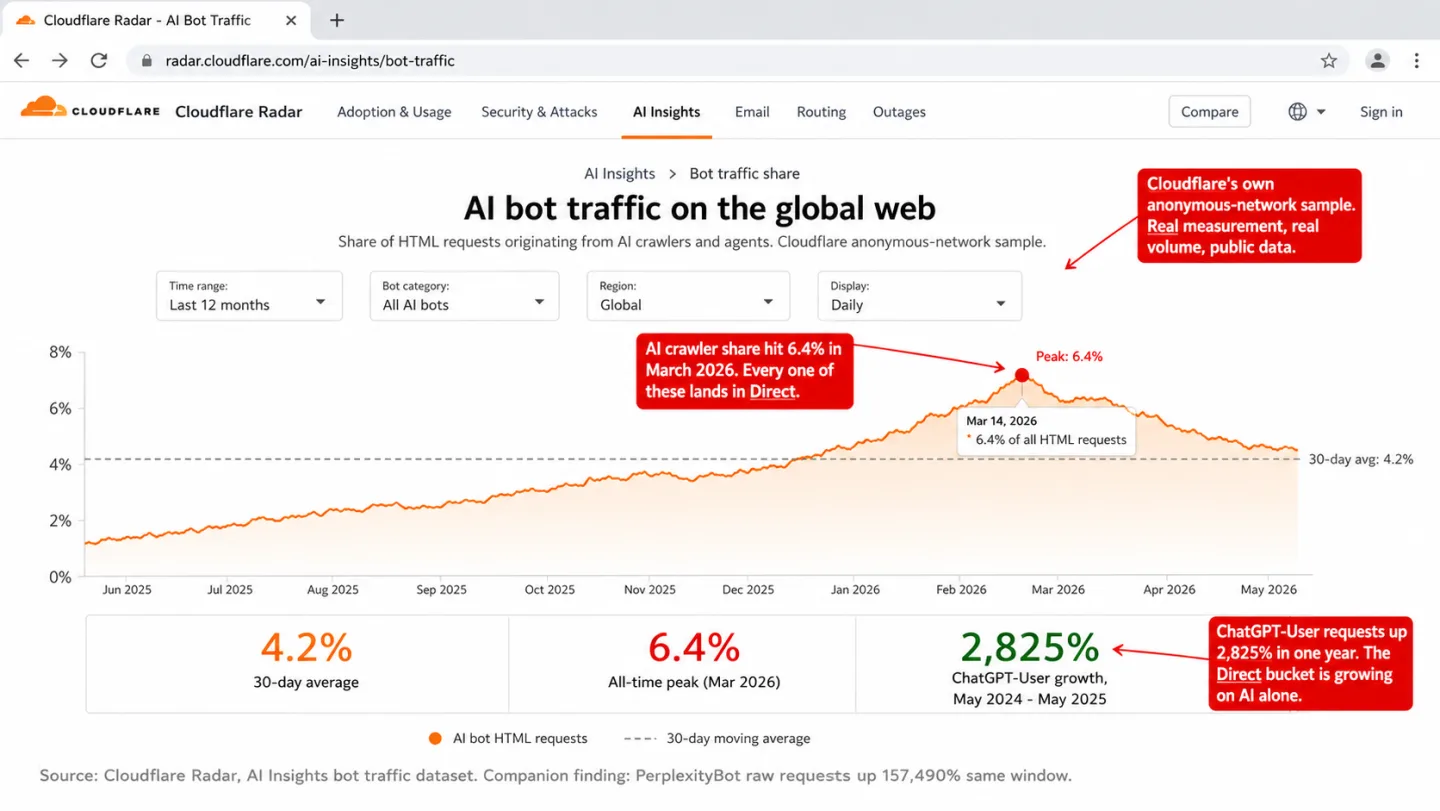

AI crawlers deserve a line of their own. Cloudflare tracked ChatGPT-User requests up 2,825% from May 2024 to May 2025, and PerplexityBot raw requests up 157,490% over the same window. Barracuda's research documented one web application receiving 9.7 million AI scraper requests in 30 days. Many of those crawlers execute JavaScript now, which means they do fire your tracker and land in Direct.

Cloudflare CEO Matthew Prince put the trajectory plainly at SXSW in March 2026: "Before the generative AI era, the internet was only about 20% bot traffic... in 2027, the amount of bot traffic online will exceed the amount of human traffic." If that trajectory holds, your Direct bucket will grow along with it, and the question "is my direct spike bots" will become the default question for everyone.

Why GA4's filter catches almost none of them

GA4 filters bot traffic with a single mechanism: the International Spiders and Bots List maintained by the Interactive Advertising Bureau. Google's documentation states plainly that "known bot and spider traffic is identified using a combination of Google research and the International Spiders and Bots List." The filter is automatic, non-configurable, and offers zero visibility into what was excluded.

The list only catches bots that identify themselves. A crawler whose user-agent says Googlebot/2.1 gets filtered. A scraper whose user-agent says Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/145.0.0.0 Safari/537.36 does not. The filter does no behavioral analysis, no IP reputation check, no JavaScript environment fingerprinting.

This is not a theoretical gap. Plausible ran a controlled experiment in May 2025 using three different bot setups against a GA4 property. GA4 recorded 22 pageviews from a script using the user-agent PostmanRuntime/7.43.4. It recorded 40 pageviews from Puppeteer with a spoofed browser user-agent. It recorded 17 pageviews from traffic originating on datacenter IPs. All of them survived GA4's 48-hour processing window and appeared in standard reports as real sessions.

Our own 30-day data makes the point in scale. 34.2% of the bot requests we blocked tripped the headless Chrome signal (swiftshader or llvmpipe WebGL renderer, which real consumer GPUs never return). Another 28.6% came from known datacenter IP ranges. GA4's IAB list catches neither of these categories. On the same traffic, roughly two-thirds of what we blocked would pass GA4's filter unchanged and land as sessions, most of them in the Direct channel. We cover GA4's bot detection limits in detail in a separate controlled test.
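The gap between the two approaches fits in a few lines. This is a rough sketch, not Clickport's or GA4's code: the token list is a hypothetical stand-in for the IAB list, and the renderer string is an illustrative example of what a GPU-less headless browser might report.

```python
# Hypothetical stand-in for a self-identification list. The real IAB list is
# licensed and much longer; these tokens are for illustration only.
SELF_IDENTIFYING_TOKENS = ("googlebot", "bingbot", "gptbot", "claudebot", "ahrefsbot")

# Software WebGL renderers named in the text above.
SOFTWARE_RENDERERS = ("swiftshader", "llvmpipe")


def caught_by_ua_list(user_agent: str) -> bool:
    """User-agent substring match: only catches bots that announce themselves."""
    ua = user_agent.lower()
    return any(token in ua for token in SELF_IDENTIFYING_TOKENS)


def software_rendered(webgl_renderer: str) -> bool:
    """Environment check: flags a software WebGL renderer regardless of user-agent."""
    renderer = webgl_renderer.lower()
    return any(name in renderer for name in SOFTWARE_RENDERERS)


SPOOFED_UA = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/145.0.0.0 Safari/537.36"
)
# Illustrative renderer string, assumed for this example.
HEADLESS_RENDERER = "ANGLE (Google, Vulkan 1.3.0 (SwiftShader Device), SwiftShader driver)"

print(caught_by_ua_list("Googlebot/2.1"))    # self-identified crawler
print(caught_by_ua_list(SPOOFED_UA))         # spoofed browser user-agent
print(software_rendered(HEADLESS_RENDERER))  # same bot, seen through its renderer
```

The same session passes the first check and fails the second. A filter that only has the user-agent never gets to ask the second question.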

Caught by GA4's filter:

☑ Bingbot (self-identifies)

☑ GPTBot (self-identifies)

☑ ClaudeBot (self-identifies)

☑ AhrefsBot (self-identifies)

☑ Well-behaved crawlers

Missed by GA4's filter:

☐ Playwright, Puppeteer, Selenium

☐ Residential proxy bots

☐ PostmanRuntime hits

☐ Datacenter IP traffic

☐ Click fraud bots

The asymmetry matters for the question you are here to answer. If GA4 is showing you a direct-traffic spike and you suspect bots, GA4 itself cannot help you confirm it. It does not tell you what it filtered out, it does not show engagement fingerprints per session, and it does not expose IP or browser-environment signals. You have to diagnose the spike with information GA4 never gathered.

Simo Ahava, who writes the most widely read GA4 implementation guide on the internet, was making this point as far back as 2019, when he wrote that Google Analytics bot filtering "is far from comprehensive enough to tackle all instances of bot traffic that might enter the site." The list of missed categories has grown since.

Dark social moves real humans into Direct too

Not every direct-traffic spike is bots. Some of it is the opposite problem: real humans on real browsers whose referrer information vanishes between the share and the click. The industry calls this dark social, a term Alexis Madrigal coined in a 2012 Atlantic piece after Chartbeat flagged how much Atlantic traffic arrived without any referrer.

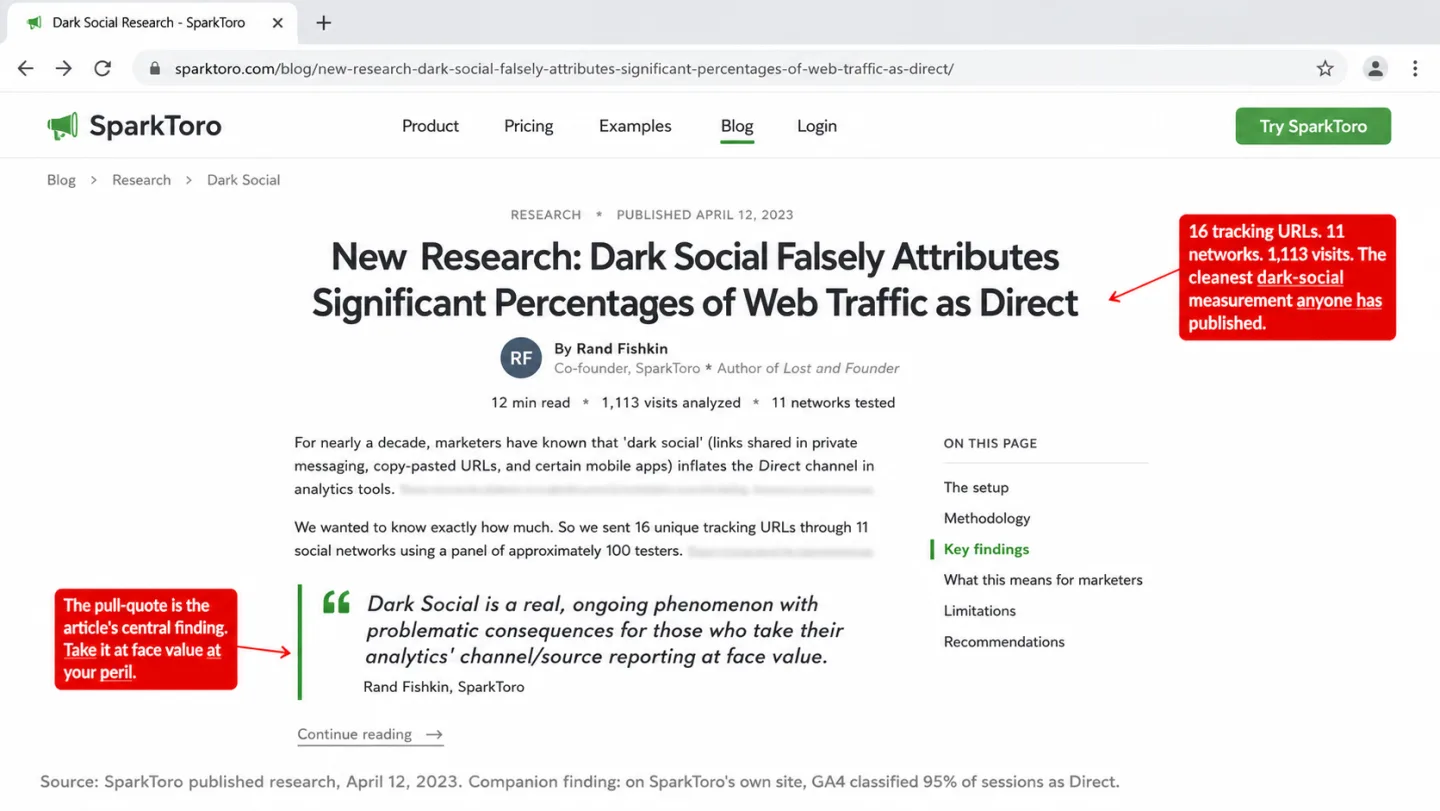

The most rigorous modern measurement came from SparkToro in April 2023. Rand Fishkin and collaborators sent 16 unique tracking URLs through 11 social networks using a panel of roughly 100 testers, then compared GA4's attribution against the known send channels. The results are the cleanest numbers anyone has published on this.

Fishkin summed it up with the finding that matters: "Dark Social is a real, ongoing phenomenon with problematic consequences for those who take their analytics' channel/source reporting at face value." On SparkToro's own site, GA4 was classifying 95% of sessions as Direct when the actual attribution, measured through tracking URLs, was nothing close to that.

The mechanism is the same every time. A user taps a link inside an in-app browser (LinkedIn, Instagram, TikTok, WhatsApp, Slack, Discord) or pastes a copied URL into a new tab. The originating context has no web page. There is no Referer to send. The destination site receives an HTTP request with no referrer header, sees no UTM in the URL, and attributes the session to Direct.

Apple's platform behavior adds another layer. Instagram, Facebook, and LinkedIn all open external links inside their own WKWebView-based in-app browser, which does not share session state with Safari. There is no prior web page for the browser to treat as a referring document, so the Referer header goes unset and document.referrer arrives empty on the tracker. A mobile user clicking a link in a LinkedIn post goes through a sequence that looks nothing like a desktop click-and-browse, and by the time the tracker fires, the attribution chain is gone.

A dark-social spike on a Monday morning is often a LinkedIn post that went semi-viral over the weekend. The engagement metrics look right. Real users scroll, click internal links, sometimes convert. If you look only at the channel attribution you see a bot-style spike. If you look at engagement, it is the opposite of a bot pattern. That distinction is the first thing to check when you are diagnosing.

Browsers quietly stopped sharing the full referrer

Modern browsers made a default change that affects every site on the web, and it is worth knowing exactly when it happened. Chrome 85 (August 2020) and Firefox 87 (March 2021) both switched their default Referrer-Policy from no-referrer-when-downgrade to strict-origin-when-cross-origin. Safari had been stripping referrers to classified trackers since ITP 2.0 in 2018, and now downgrades all third-party referrers to origin by default.

The practical effect is this: when a user clicks from https://example.com/article/some-path to your site, document.referrer reads https://example.com/. The path is gone. The query string is gone. Attribution at the domain level still works, which is why this change did not destroy analytics entirely. But any redirect intermediary in the chain collapses the referrer further. Click through a t.co or bit.ly link and your analytics sees t.co/ or bit.ly/, not the source that generated the click. Click through an ad platform's redirect and you see the ad platform, not the campaign.
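Here is a simplified model of what strict-origin-when-cross-origin lets through. It is a sketch under stated assumptions: origins are compared by scheme and host string only (default ports are not normalized), and the domain names are placeholders.

```python
from urllib.parse import urlsplit


def referrer_sent(source_url: str, destination_url: str) -> str:
    """Referrer value under strict-origin-when-cross-origin (simplified)."""
    src, dst = urlsplit(source_url), urlsplit(destination_url)
    # HTTPS to HTTP downgrade: nothing is sent.
    if src.scheme == "https" and dst.scheme != "https":
        return ""
    same_origin = (src.scheme, src.netloc) == (dst.scheme, dst.netloc)
    if same_origin:
        # Same-origin navigation keeps path and query. The fragment is never sent.
        full = f"{src.scheme}://{src.netloc}{src.path or '/'}"
        return f"{full}?{src.query}" if src.query else full
    # Cross-origin navigation: origin only, with a trailing slash.
    return f"{src.scheme}://{src.netloc}/"


print(referrer_sent("https://example.com/article/some-path?id=7", "https://yoursite.test/"))
print(referrer_sent("https://example.com/article/some-path", "http://legacy.test/"))
```

The first call keeps the domain and drops everything after it. The second returns an empty string, which your analytics reads as Direct.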

Privacy browsers go further. Brave strips referrers to origin on all cross-site navigations by default, and the Brave team announced 101 million monthly active users as of September 2025. Firefox 93+ in Strict mode overrides permissive policies sites try to set. DuckDuckGo's browser trims third-party referrers to the hostname. Cumulatively, a measurable share of real human traffic arrives with no referrer and no UTM, for reasons that have nothing to do with bots or dark social. The HTTP Archive's Web Almanac 2024 Privacy chapter found 33.87% of desktop pages now deploy some form of explicit Referrer-Policy, with strict-origin-when-cross-origin as the most common explicit value.

The third contaminant hiding in Direct is this structural drift. Every year the share of sessions arriving with stripped or origin-only referrers grows. If you compare your direct share now against your direct share three years ago on the same traffic mix, you will likely see it climbed on its own, without anything changing about your campaigns.

Diagnosing a spike in 15 minutes

When the spike hits, the triage order that saves time is: rule out a legitimate cause first, rule out broken tracking second, then look at bot signals. If you skip the first two you will spend an afternoon chasing bots that were never there. A Direct spike is one specific symptom of the larger question, "is my traffic real or bots?", and the diagnostic steps below overlap heavily with the general checklist for auditing bot contamination.

1. Did anything happen? A press mention, a newsletter send, a LinkedIn post that picked up, a shoutout in a podcast. Check your own calendar for the spike date. Check your content's publish dates. If the spike aligns with something you did, it is almost certainly dark social, not bots. Real surges usually move other channels too. A spike that moves only Direct with everything else flat is more suspicious than a spike where Organic Search, Referral, and Social all tick up together.

2. Did your site change? A deploy on the spike date, a CMS migration, a GTM container publish, a new redirect rule, a cookie banner update. Any of these can break referrer handling for a subset of users. GA4 Admin > Property Change History and your GTM version log will tell you. HTTPS-to-HTTP hops are the classic case, and a recent CMP reload bug on consent banner platforms has been a recurring driver of direct inflation for European sites.

3. What does engagement look like? This is the fastest single signal. Filter your direct channel to the spike period and read the engagement metrics. A case study from Capacity Interactive documented a Chicago-based client where Ashburn, Virginia appeared as the number two traffic source with 1.5% engagement versus a 57% site average. Classic datacenter IP pattern. If your spike has a sub-5% engagement rate or sub-5-second average sessions, it is bots. If it has normal engagement, it is real humans and you should stop looking for bots.
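The engagement check in step 3 reduces to two thresholds and a baseline comparison. The sketch below uses the 5% and 5-second cutoffs from the text; the function name, the verdict labels, and the "inconclusive" middle band are assumptions added for illustration.

```python
def engagement_verdict(
    engagement_rate: float,
    avg_session_seconds: float,
    baseline_rate: float,
) -> str:
    """Classify a Direct spike from its engagement metrics (rates as fractions)."""
    # Thresholds from the text: sub-5% engagement or sub-5-second sessions.
    if engagement_rate < 0.05 or avg_session_seconds < 5:
        return "likely bots"
    # Matching or exceeding the site-wide baseline points to real humans.
    if engagement_rate >= baseline_rate:
        return "likely humans"
    # Assumed middle band: not bot-like, but below baseline.
    return "inconclusive"


# The Ashburn pattern described above: 1.5% engagement against a 57% site average.
# The 30-second duration is an invented input; the verdict comes from the rate.
print(engagement_verdict(0.015, 30.0, baseline_rate=0.57))
```

Anything that lands in the middle band is a reason to move on to the country, hour-of-day, and landing-page checks rather than a verdict by itself.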

The country check deserves a specific note. On the largest site we monitor, 48.6% of all Direct sessions originate from China, and that share is roughly consistent with what survives our bot filtering. When a spike lands in a country outside your target market with flat 24-hour distribution, you are looking at scraping infrastructure. When it lands concentrated in a country that matches where your audience actually lives, with a circadian curve, you are looking at humans.

The hour-of-day check is the one most analysts skip. Integral Ad Science's research on bot traffic timing documents that human traffic drops sharply from 10pm to 3am local time, while bot traffic stays consistent across all 24 hours. A five-minute chart in your analytics tool is often decisive on its own. We built the Clickport hourly view specifically to make this visible at a glance.
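If your tool exports sessions by local hour, the check is a single ratio. This is a rough sketch: the 10pm-to-3am window comes from the text, while the 3-point tolerance and the sample curves are assumptions. A perfectly flat distribution puts 5/24, about 21%, of sessions in that window.

```python
NIGHT_HOURS = (22, 23, 0, 1, 2)  # 10pm to 3am local time
FLAT_NIGHT_SHARE = len(NIGHT_HOURS) / 24  # share expected if every hour were equal


def night_share(sessions_by_hour: list[int]) -> float:
    """Fraction of sessions that fall in the night window."""
    if len(sessions_by_hour) != 24:
        raise ValueError("expected 24 hourly counts, index 0 = midnight")
    total = sum(sessions_by_hour)
    if total == 0:
        return 0.0
    return sum(sessions_by_hour[hour] for hour in NIGHT_HOURS) / total


def looks_flat(sessions_by_hour: list[int], tolerance: float = 0.03) -> bool:
    """True when the night share sits near the flat-distribution value."""
    return abs(night_share(sessions_by_hour) - FLAT_NIGHT_SHARE) <= tolerance


# Invented sample data: a constant machine-like rate and a daytime-heavy human curve.
machine_like = [100] * 24
human_like = [5, 3, 2, 2, 3, 8, 20, 40, 60, 80, 90, 95,
              100, 95, 90, 85, 80, 75, 70, 60, 45, 30, 20, 10]

print(looks_flat(machine_like), looks_flat(human_like))
```

A night share near 21% is only a proxy for flatness, so treat a positive as a prompt to look at the full hourly chart.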

Landing-page distribution is the fourth non-negotiable check. Real dark-social spikes hit the piece of content that was shared, which is usually a deep URL: a specific blog post, a product page, a pricing page. Bot spikes hit the homepage, enumerate URLs, or request pages that do not exist. Paul Conroy's 2021 diagnostic piece put it well: "Direct is Analytics' catch-all way of saying 'I've no idea.'"

Is your direct-traffic spike bots? Run the numbers

The signals compose. Any one of them can be noisy, and a real spike almost never hits exactly one. Scoring them together on a 0-100 scale shows which way the weight of evidence falls.

Three tiers of output, each suggesting a different next step. A score above 70 means the spike almost certainly includes bots and you need tighter filtering. A score between 40 and 70 means the signals are mixed and you should look at a sample of individual sessions to decide. A score below 40 means the signals point to legitimate dark social or referrer loss, and you are likely chasing a ghost.

One note on thresholds: they are calibrated for a typical content site. A SaaS dashboard with a natural login-and-stay pattern will skew your bounce rate down. A high-bounce blog with casual readers will skew it up. Treat the calculator as a triage, not a verdict. If it scores 65, look at ten random sessions in the spike and you will know within a minute.
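For readers who want to reproduce the triage offline, here is one way to combine the signals into a 0-100 score. The tier boundaries (above 70, 40 to 70, below 40) come from the text. The weights, field names, and input values are hypothetical and are not the calibration used by the calculator.

```python
from dataclasses import dataclass


@dataclass
class SpikeSignals:
    bounce_rate: float          # 0.0 to 1.0 within the spike
    top_country_share: float    # share of spike sessions from the top country
    homepage_share: float       # share of spike sessions landing on the homepage
    flat_hourly: bool           # True if traffic is flat across 24 hours
    other_channels_moved: bool  # True if Organic, Referral, or Social rose too


def bot_score(signals: SpikeSignals) -> int:
    """Weighted sum on a 0-100 scale. The weights are illustrative assumptions."""
    score = 30 * signals.bounce_rate
    score += 20 * signals.top_country_share
    score += 20 * signals.homepage_share
    score += 20 if signals.flat_hourly else 0
    score += 0 if signals.other_channels_moved else 10
    return round(score)


def tier(score: int) -> str:
    """Map a score onto the three tiers described in the text."""
    if score > 70:
        return "likely bots: tighten filtering"
    if score >= 40:
        return "mixed: sample individual sessions"
    return "likely dark social or referrer loss"


suspicious = SpikeSignals(0.98, 0.90, 0.85, flat_hourly=True, other_channels_moved=False)
shared_post = SpikeSignals(0.45, 0.50, 0.10, flat_hourly=False, other_channels_moved=True)

print(tier(bot_score(suspicious)))
print(tier(bot_score(shared_post)))
```

Adjust the weights to your own site before trusting the number. A login-heavy SaaS product and a high-bounce blog should not share a bounce weight.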

What to do once you know

Three different problems, three different fixes.

When it is bots. The answer is filtering at ingestion, not cleaning up in the dashboard. GA4 gives you two usable levers: the unwanted-referral list and IP-range exclusions. Both help with specific known sources and neither touches behavioral bots. If bots are a meaningful share of your traffic, the honest next step is a tool that applies behavioral and environment-level detection at ingest. Clickport runs eight detection checks before a request becomes a session, with the full bot-detection framework documented here. The Bot Center surfaces exactly what was blocked, by method and by source, which is the transparency GA4 does not offer.

Beyond the automatic filtering, the two moves that compound over time are engagement-gated reporting and manual session flagging. Engagement-gated reporting means your primary metric is not pageviews but sessions with real interaction: scroll depth above zero, time on page above a threshold, at least one click. This is the core of Clickport's engagement-first dashboard and it makes bot contamination visible on contact instead of hidden in the averages. Manual flagging handles the residual: when you spot a suspicious session in the sessions panel, one click marks it and it never shows in your dashboards again.

When it is dark social. The fix is not technical, it is procedural. Tag every link you share with UTM parameters before you publish. The standard for social-shared links is ?utm_source={platform}&utm_medium=social&utm_campaign={slug}. UTMs survive in-app browsers because they travel in the URL's query string, not in an HTTP header. Once tagged, Slack, WhatsApp, TikTok, and Discord traffic all lands in a Social channel with the correct source. The spike becomes legible.
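Tagging by hand is where typos creep in, so a small helper is worth having. This is a minimal sketch using only the standard library; the function name is made up, and replacing any existing utm_ parameters is a design choice assumed here, not a requirement.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def tag_link(url: str, platform: str, slug: str) -> str:
    """Append the social UTM pattern from the text, keeping other query params."""
    parts = urlsplit(url)
    # Keep existing non-UTM parameters; stale utm_ values are replaced.
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.startswith("utm_")
    ]
    query += [
        ("utm_source", platform),
        ("utm_medium", "social"),
        ("utm_campaign", slug),
    ]
    return urlunsplit(parts._replace(query=urlencode(query)))


print(tag_link("https://example.com/blog/post", "linkedin", "spring-launch"))
```

Run every outgoing link through the same helper and the source values stay consistent across posts, which matters more than the exact naming scheme.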

For content that is already out in the world, the only useful move is to shift your measurement frame. Pull engagement metrics for the direct channel and compare them against your site-wide baseline. If they match or exceed the baseline, you are measuring real humans, just with missing attribution. The number is real. The label is wrong. Sometimes that is enough to act on.

When it is referrer loss. This one is almost always self-inflicted. A Referrer-Policy: no-referrer header you added site-wide. A rel="noreferrer" that your CMS adds automatically to all external links. A CMP that reloads the page after consent and can drop the UTM parameters from the URL. A URL shortener in your email template that collapses the chain. Audit the obvious surfaces first: cookie banner configuration, email template link handling, social sharing buttons, anything that rewrites outgoing links. The common culprits are documented well by OneFurther, and the fix is usually a one-line config change.

If you want to see what your analytics look like without the bot layer at all, the 30-day free Clickport trial gets you set up in about two minutes, no credit card. The onboarding puts the Bot Center in front of you on day one, so you can see the numbers before they have been smoothed into dashboards.

Frequently asked questions

What is "(direct) / (none)" in GA4?

It is the label GA4 applies when a session has no referrer, no UTM parameters, and no auto-tagged ad identifier at the moment the session_start event fires. Google's own documentation lists missing UTM tags, URL shorteners, offline documents, direct URL entry, and ad-blocker interference as common causes. Bots are not named in that list, but they produce the same signature and land in the same bucket.

Does GA4 automatically filter bot traffic?

Partially. GA4 applies the IAB/ABC International Spiders and Bots List automatically, and you cannot turn it off or see what it caught. The list only filters bots that identify themselves by user-agent. Spoofed browsers, headless Chrome, Puppeteer, and Playwright all pass through. Plausible's May 2025 test confirmed GA4 records bot traffic from PostmanRuntime scripts, Puppeteer with spoofed UAs, and datacenter IPs as normal sessions.

Why is direct traffic from China or Singapore spiking?

A large-scale AI-scraping wave that began in October 2025 originates mostly from Alibaba (AS45102) and Baidu (AS55967) infrastructure. The scrapers use real browser user-agents, execute JavaScript, and pass no Referer. That combination lands them in Direct. On our network, China accounts for nearly half of all Direct sessions on the largest site we monitor.

Is a high direct-traffic share always bad?

No. A well-branded site with returning users will always see high Direct. The benchmark most practitioners use is 15-25% for ecommerce and 20-35% for content, but those numbers are averages across audiences and do not apply one-to-one. What matters is whether the engagement metrics inside the Direct channel match your site-wide baseline. If they do, Direct is healthy. If they collapse, Direct is contaminated.

How do I tell dark social from bots in ten seconds?

Engagement metrics. Real humans scroll, stay, and sometimes convert. Bots do none of that. Filter your Direct spike to the period in question and read average session duration, bounce rate, and scroll depth. If they match your site baseline, it is dark social. If they collapse, it is bots.

Does iCloud Private Relay cause direct traffic spikes?

Not through the referrer. Private Relay operates at the IP layer and does not strip the Referer header. It masks the user's IP and may cause geolocation misattribution, but the session's referrer still flows through. Safari's ITP does strip referrers to origin on third-party navigations, and that is a larger structural contributor to Direct growth than Private Relay specifically.

What does Clickport do that GA4 does not?

Eight detection checks at ingestion, a Bot Center that shows what was blocked by method and source, per-session engagement signals (scroll, duration, behavior score), manual session flagging that removes bots from every panel retroactively, and a channel classifier that separates AI Search from Direct so you can see AI referral traffic as its own category. GA4 gives you a single user-agent list with zero visibility.

Direct traffic is a confession, not a number. It is your analytics tool admitting it does not know where the traffic came from. When you stop reading it as a source and start reading it as a residual, the diagnosis gets faster and the dashboard starts telling you something useful. Everything you measure on top of an unclean Direct pool inherits its noise, which is why the question "is this spike bots" is worth the fifteen minutes.

Clickport is built for teams that want to see what is in the bucket, not smooth it into an average. Start with the free 30-day trial, no credit card required. EUR 9 a month covers 10k monthly pageviews, and you will see your Bot Center populated within a day of installing the tracker.
